#50 AI in hybrid warfare. Age verification. Cognitive load, cognitive debt, technical debt.

You're going to get really tired of reading about all this and AI.

“Cognitive load”

It is the mental effort involved in anything from, at best, processing information to, at worst, coordinating an entire project: of course you don’t do everything yourself, but you’re the idiot who keeps it all in his head and answers for things turning out well.

Because someone has to do it.

By using AI to solve problems, we externalize part of that effort: the model thinks, we validate.

The problem is that, over time, the mental muscle tends to atrophy from lack of use.

“Cognitive debt”

It is the cost of that mental muscle atrophying.

Every time we delegate a mental task to an AI (summarizing, reasoning, structuring) instead of doing it ourselves, we accumulate debt: the capacity we don’t exercise today will be costly to recover tomorrow.

Rather than loss, I prefer to call it erosion.

“Technical debt”

A concept that originated in software development (for example, pulling public, tested libraries into your project to finish faster and without taking risks, instead of reinventing a wheel that is already spinning in a million places).

In the context of AI use, it refers to “asking for” code, complex documents, or workflows without any independent knowledge of the subject and its context.

When the AI feeds you hallucinations, or the script stops working or throws an error you don’t understand, the user is left exposed, because they never mastered the underlying process.

As the use of AI keeps spreading, all these concepts gain importance and popularity:

Today we’ll spend a few words on cognitive load, prompted by this article in which I saw myself very much reflected: the feeling of being exhausted by mid-morning because I’ve gotten myself into three heavy messes at once. I prompted the first with a testament of almost a thousand words; I’m polishing and optimizing the second and the third; and before I’ve even seen the new output of the second, I’ve already hit on a different (and, of course, better) way of framing the first. AI is wonderful for reaching places you never thought you’d reach alone, but chasing the rainbow has a very obvious cost.

There’s a ubiquitous mantra these days: “AI won’t take your job: someone who masters it will.”

Maybe.

This task-based framing won’t get us very far, but we’ll talk about that another time.

What’s certain is that micro-firms like mine are seeing our possibilities multiply month by month.

But it’s obvious to me that organizations large and small need to systematize their efforts so we don’t end up working twice as much as before: vibecoding the same things, burning ourselves out reviewing near-identical deep-research outputs, trying to achieve checkmate across all skills, and ingesting, summarizing, and extracting insights from the latest guidelines published by the authority of Sebastopol.

And then there’s the issue that, by optimizing Claude that way, you’re handing over all your mojo.

And that is the real issue.

But that story will be told another time.

You’re reading ZERO PARTY DATA. The newsletter about current affairs and tech law by Jorge García Herrero and Darío López Rincón.

In the spare time this newsletter leaves us, we solve tricky messes related to personal data protection regulation and artificial intelligence. If you’ve got one of those, give us a wave. Or contact us by email at jgh(at)jorgegarciaherrero.com

🗞️News from Data world 🌍

.- Dario Amodei was once again the protagonist of the weekend gossip: he stood up to the US Government and drew the final red line of his AI ethics at subjecting mass-surveillance capabilities and fully autonomous lethal use to safeguards. In response, Trump signed with OpenAI and placed Anthropic on a kind of blacklist for US administration contracts.

The latest episode of this standoff has been a mass exodus from ChatGPT to Claude, which has pushed the latter to number one among downloaded apps in the main markets.

.- Lukasz Olejnik explains the digital side of the “hybrid attack” on Iran: Israel hacked “BadeSaba,” a popular Iranian prayer app, to deliver to a large part of the population the kind of propaganda that in old movies used to be dropped as leaflets from airplanes.

All of this happened before the Ayatollahs cut off internet access, of course.

As I was saying, Lukasz not only explains it but also puffs out his chest for having predicted it in his book “Disinformation”.

.- Dell Cameron in Wired: Data broker security breaches have cost twenty billion euros in the USA.

The social side of this news: a report from the Democrats on the Joint Economic Committee quantifies the damage caused by identity theft and blames four major breaches in the data broker ecosystem.

The article revisits an earlier investigation into these companies’ opt-out pages, which had been deliberately hidden from search engines with embedded “noindex” instructions, and connects it with new political pressure: audits and requests for information sent to specific brokers.

The legal-technical side: the “noindex” trick is a pattern of breach of the accountability principle that is very easy to quantify: simply crawling these pages is enough to detect (and document) a concrete intention not to comply with the GDPR.

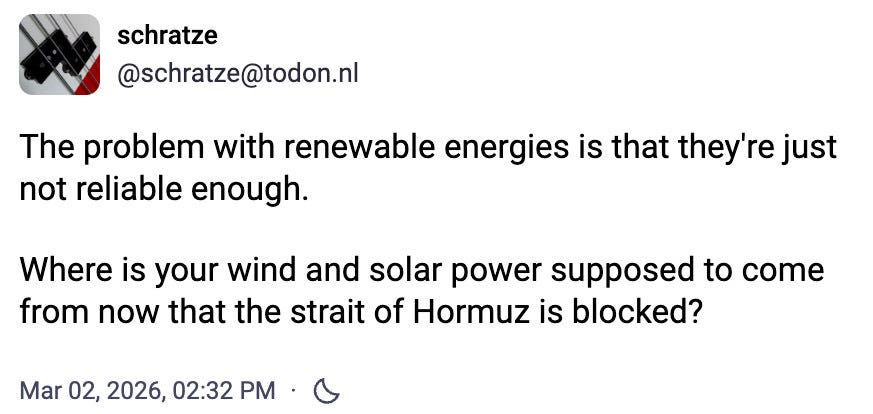
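The crawling point lends itself to a quick sketch. This is a minimal illustration, not the method used in the investigation Wired cites: it assumes the hiding was done either with a `<meta name="robots" content="noindex">` tag or with an `X-Robots-Tag` HTTP header (both standard ways of asking search engines not to index a page). The helper names (`is_noindexed`, `check_url`) and the set of crawler names are hypothetical, and no broker URL is given here.

```python
# Minimal sketch (assumption): detect whether a page asks search engines
# not to index it, via a robots <meta> tag or the X-Robots-Tag header.
from html.parser import HTMLParser
from urllib.request import urlopen

# Hypothetical, illustrative subset of <meta name="..."> values to inspect.
ROBOT_META_NAMES = {"robots", "googlebot", "bingbot"}


def _split_directives(value):
    """Turn 'noindex, nofollow' or 'googlebot: noindex' into bare directives."""
    # Drop an optional 'botname:' prefix, as used in X-Robots-Tag headers.
    return [part.split(":", 1)[-1].strip().lower() for part in value.split(",")]


class RobotsMetaParser(HTMLParser):
    """Collects indexing directives from robots-style <meta> tags."""

    def __init__(self):
        super().__init__()
        self.directives = set()

    def handle_starttag(self, tag, attrs):
        # HTMLParser lowercases tag and attribute names, but not values.
        if tag != "meta":
            return
        attr = {name: (value or "") for name, value in attrs}
        if attr.get("name", "").lower() in ROBOT_META_NAMES:
            self.directives.update(_split_directives(attr.get("content", "")))


def is_noindexed(html, headers=None):
    """Return True if the HTML or the response headers request noindex."""
    parser = RobotsMetaParser()
    parser.feed(html)
    parser.close()
    directives = set(parser.directives)
    for key, value in (headers or {}).items():
        if key.lower() == "x-robots-tag":
            directives.update(_split_directives(value))
    # 'none' is shorthand for 'noindex, nofollow'.
    return bool(directives & {"noindex", "none"})


def check_url(url):
    """Fetch a page (e.g. an opt-out page) and report whether it is noindexed."""
    with urlopen(url, timeout=10) as resp:
        html = resp.read().decode("utf-8", errors="replace")
        # resp.headers keeps repeated headers, so every X-Robots-Tag is seen.
        return is_noindexed(html, resp.headers)
```

Running `check_url` over a list of opt-out pages and storing each URL, timestamp, and result would produce the kind of dated record the paragraph above has in mind. A `robots.txt` disallow rule is a separate mechanism that this sketch does not check.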

.- One of the most disturbing moments this bald guy has had this week (and there have been quite a few): documents disclosed by Meta in the New Mexico litigation show that the company knew its algorithm suggested four times as many underage accounts to potentially dangerous adults (inclined to groom and sextort them).

Almost one in four of those adults (yes: one in four (!!)) actually tried (the figure comes from the documents themselves).

Did Meta fix the algorithm as a consequence of this data? No.

It’s engagement, my friend!!

.- India remains one of the best third countries for international transfers. Increasingly so: “The Indian state of Maharashtra is developing an artificial intelligence tool that uses ‘accent, tone, and word choice’ to identify and deport Bangladeshi Muslims and Rohingya displaced from Myanmar.”

That report commissioned by the EDPS on the relevant regulation in China, Russia, and India itself is starting to look outdated.

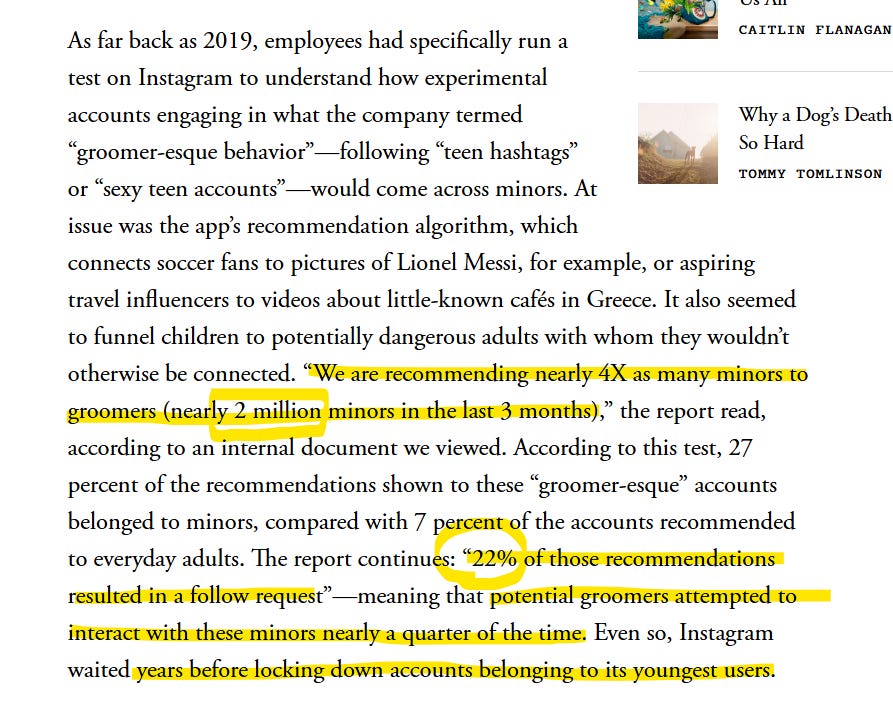

.- “Just the tip.” A very interesting piece on the contrast between the DSA and the big trial in the United States, with Meta and TikTok in the middle: the trial’s ability to bring to light juicy documents about who is responsible for the whole addictive ecosystem they built.

For example, that they would only approve some form of screen-time control if it didn’t reduce total user usage by more than 5%.

Over here, they show you the nice reasonable-screen-use policy presented by Compliance; over there, they have to produce the internal document from top leadership.

Age verification

We have so much on this topic this week that we’re dedicating a section to it.

.- We start with this comprehensive article by Waydell D. Carvalho and Michelle Donelan which gathers in one place all the problems posed by the seemingly good idea of imposing age verification on platforms.

.- Discord now says it is postponing verification through facial age estimation to an unspecified date around mid-year. They say they understand people have privacy concerns and that they will try to be more transparent. It may not have helped that they never said which legal basis they rely on for the processing, that amusing options such as “verification through a fingerprint associated with the email address” show up at the provider level, or that they keep hinting that their existing profiling already lets them infer age.

You should never bet the farm on profiling being less invasive than facial estimation without enough information about both, but three questions arise. Why do you need the second if you already have the first? Are there really two age-verification systems, an undeclared and undisclosed one for 90% of people plus the facial one they wanted to introduce? And doesn’t anyone in legal review these posts in which the CEO goes wild explaining things?

The finishing touch is that they acknowledge they were convinced neither by Persona as a provider nor that the information never left the device. By comparison, that might not be very good news for LinkedIn or Reddit. That said, posts on Bluesky cast doubt on Discord’s claims.

.- Last but not least: a joint open letter signed by a bunch of scientists against… can you guess? Age verification.

The letter distinguishes verification, estimation, and age inference, and warns of technical harms of all kinds if access control is deployed at internet scale without much care.

Summary of the risks (hold on tight): easy bypass (VPNs, borrowed credentials, deepfakes); possible increase in malware/scams due to migration to alternative services; need for complex global trust infrastructures; privacy invasion through biometrics and behavioral control; biases and errors, among others.

But the main argument is irreversibility: once universal access control is installed, the slide toward reuse for censorship or other shady purposes is far more likely than its dismantling; it is a governance risk, not only a privacy one.

📄High density docs for data junkies☕️

.- This paper by Theodore Christakis explains all the data the most widely used AI models quietly collect from you.

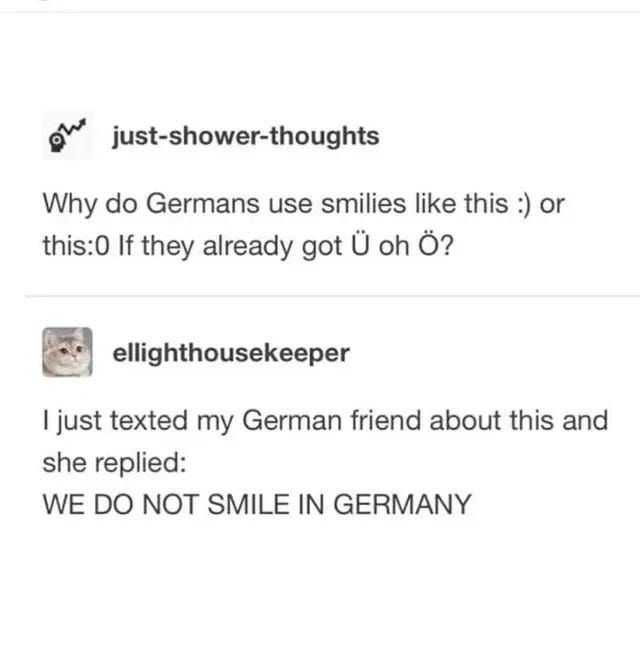

💀Death by Meme🤣

🤖NoRobots.txt or The AI Stuff + 📃The paper of the week

.- Hard to read, but fascinating: The Dead Law Theory: The Perils of Simulated Interpretation.

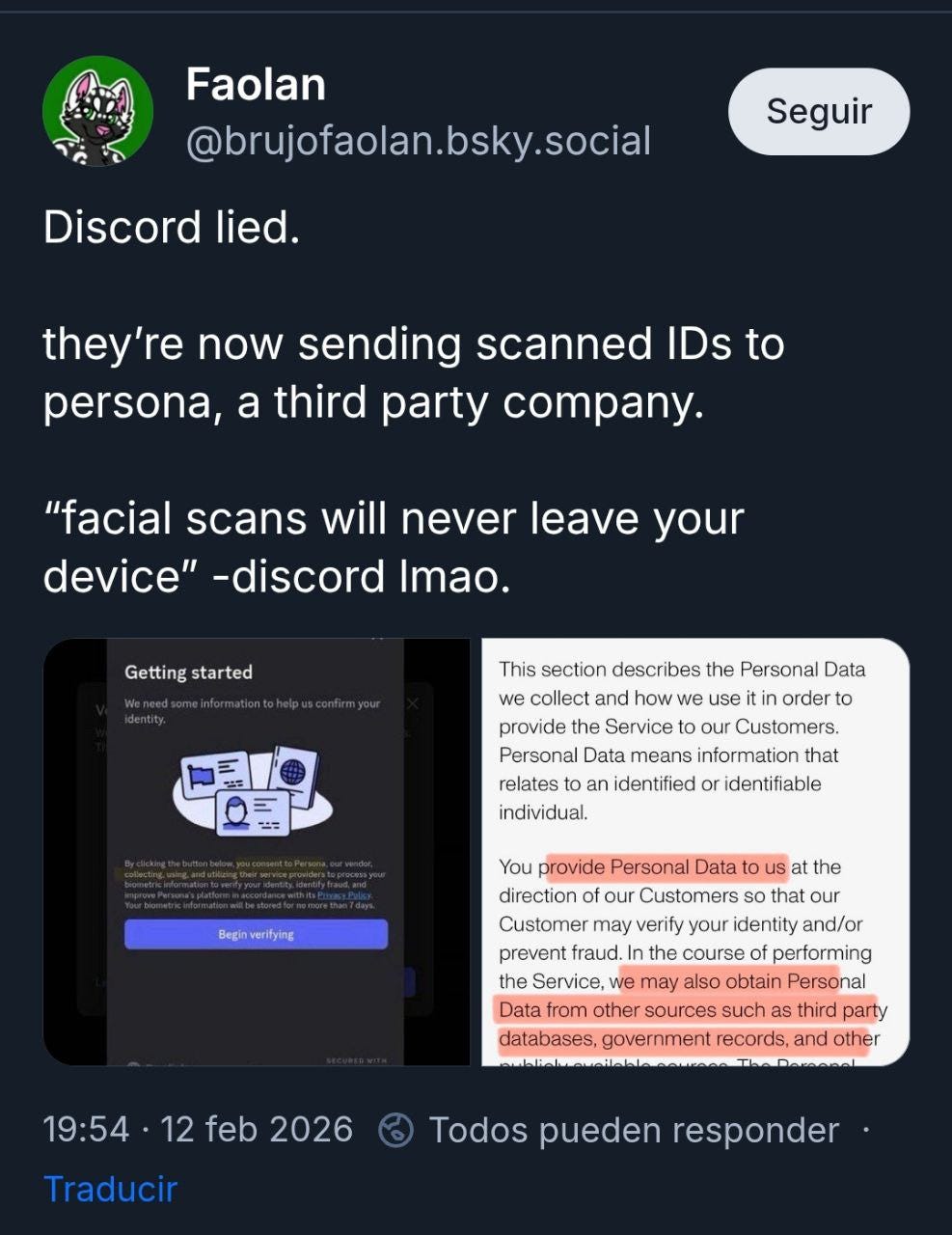

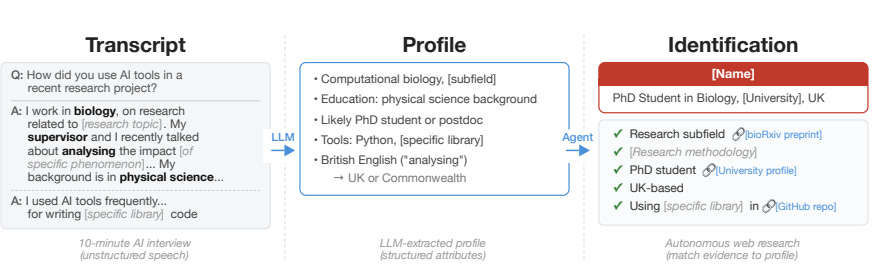

.- From the same Natzir and the same interesting LinkedIn post comes the paper by researchers from ETH Zurich, MATS, and someone from Anthropic on how LLMs may enable large-scale, automated deanonymization.

Besides including the prompts they used, the paper reports results whose reliability is a bit scary:

Hacker News, connected with LinkedIn: the agent correctly identified 226 of the 338 targets (67%) with 90% precision (95% CI: 86–93%; 5 incorrect identifications, 86 abstentions).

On Reddit: accuracy of 25–52% with precision of 72–90%.

On transcripts of Anthropic interviews: it identified 9 of the 33 scientists with 82% precision (2 errors, 22 refusals). The screenshot shows the operational flow for precisely this case.
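Why can precision stay high while most targets go unidentified? Because the agent is allowed to abstain. A minimal worked example with the interview-transcript figures (9 correct, 2 errors, 22 refusals out of 33); the function name is hypothetical, and we label the share of all targets correctly identified “recall” and the share of attempted identifications that were right “precision”.

```python
def identification_rates(correct, incorrect, abstained):
    """Hypothetical helper: recall over all targets, precision over attempts."""
    attempted = correct + incorrect   # cases where the agent named someone
    total = attempted + abstained     # every target, including refusals
    return {
        "recall": correct / total,          # share of all targets correctly named
        "precision": correct / attempted,   # share of named targets that were right
    }


# Anthropic interview transcripts: 9 correct, 2 wrong, 22 refusals (33 scientists).
rates = identification_rates(correct=9, incorrect=2, abstained=22)
print(f"recall={rates['recall']:.0%} precision={rates['precision']:.0%}")
# prints "recall=27% precision=82%"
```

A high precision alongside many abstentions is precisely what makes the result unsettling: when the agent does put a name to someone, it is usually right.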

📎Useful tools

.- We declare ourselves absolute fans of Tey Bannerman. And Tey, friends, has done it again. It’s not at all easy to simplify something this complex while keeping this level of detail. I recommend setting aside a Saturday morning to soak deeply in this framework, which will help you a lot, a lot, to use AI better.

.- It’s not a ready-to-use tool like the ones we usually feature in this section, but someone has built a comparison tool for minor public contracts: a citizen initiative by a computer engineer to surface red flags across many low-value contracts. Link to the website with metrics and data: open contracting. Link to the X thread with the author’s explanation.

🙄 Da- Tadum Bass!

There may be no sign, but they’ve nailed the part about making sure you know you’re being watched.

If you think this newsletter might appeal to and even be useful to someone, forward it to them.

If we missed any doc, comment, or silly thing that clearly should have been in this week’s Zero Party Data, write to us or leave a comment and we’ll consider it for the next issue.