#51 Age verification and other “zero-sum” dilemmas

And the amazing case of the Iranian Government's surveillance turned against it

Age verification is all the rage.

Everyone is in favor.

The goal of protecting minors is beyond dispute, and that rallies public opinion behind the measure.

Perhaps, like us, you are one of those souls who worry about second-order effects, all the more so when those effects can shatter the right to privacy, which, as we know, underpins all other rights.

And as you already know, anyone can say "it's for the children" in a second and it sounds fucking great, while it takes you five long, hesitant minutes to explain risks that seem remote even to you as you struggle to simplify them.

Further down we cover a devastating example of how the surveillance camera network installed by a Government (the Iranian one) became key to that Government's own undoing once a hostile third party hacked it.

But what we had in mind was a clean, clear, interactive tool, with little examples, links, and a certain tongue-in-cheek house style.

Well then, fear not: here is what you were looking for.

We built this interactive artifact half-and-half with Claude.

Substack does not let us embed iframes, so you cannot play with it directly here. Instead we are including a demo video and the link, so you can review it, criticize it, and share it as you please.

You are reading ZERO PARTY DATA. The newsletter on current affairs and technology law by Jorge García Herrero and Darío López Rincón.

In the free time this newsletter leaves us, we solve complicated messes involving personal data protection and artificial intelligence regulation. If you have one of those, give us a little wave. Or email us at jgh(at)jorgegarciaherrero.com

🗞️News from the Data World 🌍

.- My top marks go to this piece by Nicholas Thompson: Israel reportedly hacked Iran's traffic cameras and had full visibility into the movements of Ayatollah Khamenei and his guards.

The central thesis here is that surveillance infrastructure has an inherent symmetrical vulnerability: whoever watches can end up being watched through their own system.

The plot twist is devastating: cameras deployed for internal control were reportedly repurposed by an adversary to track the movements of Iran's own leadership. The moral is not only about state cybersecurity but about political architecture: every large-scale visibility network creates an attack surface that can be turned against its own operator. The deepest defeat is not one of security but of doctrine: a system conceived to control the population ended up helping to eliminate the sovereign who ordered it.

That is the tweet.

And this holds true whatever the color of the beloved leader.

.- Another one from Nicholas, from yesterday: Claude has become the fucking smart-ass student you never want to have to grade.

.- Researchers trick a bot that prescribes meds. This case shows that clinical AI systems keep failing exactly where failure is least acceptable: operational safety. Mindgard managed to alter the behavior of Doctronic's bot (used in a sandbox, thank God), inducing false recommendations about vaccines, methamphetamine, and OxyContin doses. Conversational "guardrails" do not guarantee robust clinical control, and this attack needed no exotic techniques.

.- This piece by Sam Bent challenges Proton's maximalist privacy marketing. His thesis is that Proton's "zero access" covers only data at rest, not every stage of the data flow; moreover, payments, metadata, and other points still leave an exposed surface. In light of the Atlanta activist case, he presents the matter as a pattern rather than an anomaly. What struck me most is his closing note: Proton's real product may not be technical invulnerability but carefully managed semantics about exactly where its promise ends.

We have used the Proton suite here almost since day one, and I have to admit that I do not expect invulnerability against orders from judicial authorities, only against illegitimate third-party attackers. That said, one must always watch for those moments when trusted parties fail to keep their commitments, or speak with a forked tongue.

📖 Very strong data documents ☕️

.- Via Don Luis Montezuma: yesterday the Dutch authority published, just like that, an 11-page guidance document on automated decision-making in recruitment. Something nobody does, you know. Note: the English translation is only so-so. When it says the supervisor has to be "competent" and also "competent," the first means having the necessary skills, and the second means having the authority (power) to change the outcome of the automated proposal.

But what could I tell you about effective human oversight after this cracker…

.- Via Samuel Parra and Javier Sempere, we have two interesting AEPD decisions.

1. The €950,000 fine that has landed on Yoti, one of the big names in age verification (the company behind the system used by Epic/Fortnite, and which they tried to get the FTC to greenlight for COPPA). With this type of system there has always been some doubt as to whether Article 9 actually applies, but here, by their own account, there is clearly a biometric template. In other words, there is no humanly possible way to argue that it does not enable the purpose of unique identification under Article 9.

2. The curious NVIDIA case, closed without a sanction: curious because the request, made under the right of access, was for a copy of saved games. NVIDIA may seem to do nothing but make graphics cards, but it has a cloud gaming service that works very well. We could talk about the inherent joint controllership between NVIDIA and the catalog platform whose account you link to its servers, but that would take quite a bit more digital ink.

It escapes a sanction because it complied a few days before the statement of allegations itself. Even though that was several months past the general one-month deadline counted from the March request, the AEPD considers the matter settled because the right was ultimately satisfied.

If the access request had expressly sought an explanation of how the anti-cheat system works, they would have tried to rely on Article 15.4 to deny it. That is the usual modus operandi, even though Article 15.4 does not allow the right to be denied entirely, and the EDPB gave a very similar example in its guidelines.

.- Interesting CNIL document on developing AI systems with health data. It explains how to structure the system around health data, covering database reuse, prior formalities, and specific precautions. The CNIL insists that anonymizing aspirationally is not enough: one has to determine the legal basis, repository governance, separation of purposes, and compatibility with the not inconsiderable sector-specific regulatory obligations. Notably, the text is written for DPOs and project managers, not only for lawyers. Authors: Aurore Gaignon, Hélène Guimiot, Charlotte Barot, Paul Valois, Félicien Vallet.

.- Comment by Peter Craddock on the French Council of State ruling that resolved the appeal against CNIL’s huge 20 million sanction on Criteo.

This matter interests me a great deal because it has everything to do with Scania, to which I devoted a LinkedIn post (with exchanges with Craddock and Mark Leister in the comments) as well as last Tuesday's more measured post.

.- This funny, "in your face" post by Al Zaretski pulls up by the root a very widespread error: confusing generic technical observability with the legal traceability required by the AI Regulation (AI Act). Zaretski dismantles several myths about logging in high-risk systems and makes clear that uptime, API calls, or crash logs do not by themselves satisfy Article 12. Provider and deployer have different obligations, and log retention overrides neither data minimization nor the GDPR's storage limitation principle.
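For the more technically minded, here is a minimal sketch of the distinction, written by us and not taken from Zaretski's post. It assumes Python and two hypothetical record types: a generic ops entry (an API call with status and latency) and a traceability event whose fields are loosely inspired by the items the AI Act lists for certain biometric identification systems. All class, field, and function names are our own illustrations, not anything the Regulation prescribes, and the retention period is deliberately left as a parameter to be taken from the applicable rules.

```python
from dataclasses import dataclass, fields
from datetime import datetime, timedelta, timezone

# Hypothetical record types for illustration only: the AI Act does not
# prescribe these class names, field names, or any particular format.

@dataclass
class OpsLogEntry:
    """Generic technical observability: an API call with status and latency."""
    timestamp: datetime
    endpoint: str
    status_code: int
    latency_ms: float

@dataclass
class TraceabilityEvent:
    """Assumed traceability record, loosely inspired by the items the AI Act
    lists for certain biometric identification systems."""
    use_started: datetime      # start of the period of use
    use_ended: datetime        # end of the period of use
    reference_database: str    # which reference data the system consulted
    input_ref: str             # pointer to the input, not a copy of the personal data
    verified_by: list[str]     # people who checked the output (human oversight)

# Field names every traceability record is expected to carry (our assumption).
REQUIRED_TRACE_FIELDS = {f.name for f in fields(TraceabilityEvent)}

def supports_traceability(record) -> bool:
    """True only if a dataclass record carries every assumed traceability field."""
    present = {f.name for f in fields(record)}
    return REQUIRED_TRACE_FIELDS <= present

def purge_expired(events, now, retention):
    """Storage limitation: keep only events whose period of use ended within
    `retention`. The period itself must come from the applicable rules."""
    return [e for e in events if now - e.use_ended <= retention]

if __name__ == "__main__":
    now = datetime(2025, 1, 1, tzinfo=timezone.utc)
    api_call = OpsLogEntry(now, "/predict", 200, 12.5)
    event = TraceabilityEvent(now - timedelta(hours=1), now, "ref-db-v1",
                              "input-42", ["reviewer-a"])
    print(supports_traceability(api_call))  # False: uptime-style data is not a trace
    print(supports_traceability(event))     # True
```

The point of the toy: a perfectly healthy ops entry still says nothing about when the system was used, against which data, or who checked the result; and the purge step is a reminder that an obligation to keep logs is not a license to keep them forever.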

💀Death by Meme🤣

.- “Now that the Brent price is going down, we can be sure that the fuel price will also go down accordingly”

.- “The fuel price:”

🤖NoRobots.txt, or The AI Thing

.- A new twist is coming in the AI part of the Digital Omnibus. It seems that France, Spain, Germany, and Slovakia have acted as a blocking minority to get two specific prohibitions inserted into the text: AI systems capable of generating non-consensual intimate images, videos, and similar content (NCII), and child sexual abuse material (CSAM). **Luca Bertuzzi** keeps us all up to date, as always, on what is cooking in Brussels.

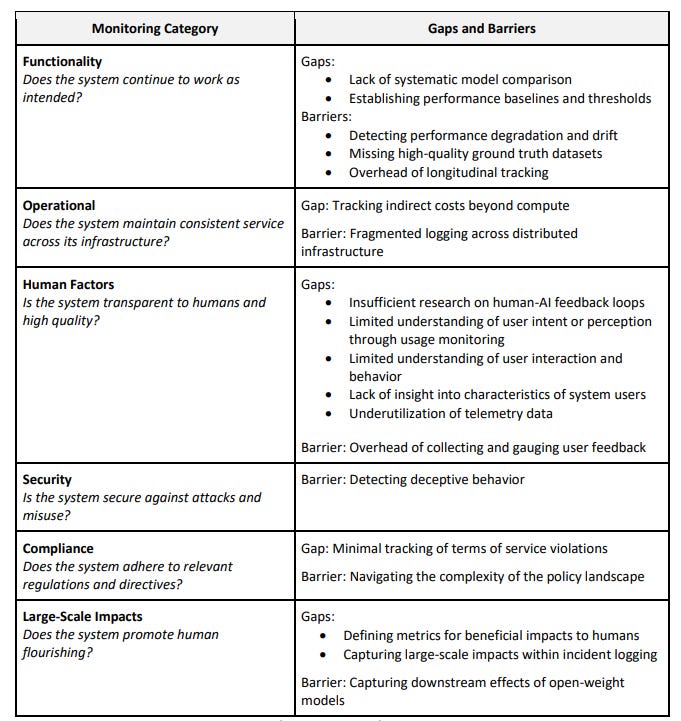

.- NIST has released a report on the problems of monitoring deployed AI. It is a compendium of what was presented and discussed at two workshops, but it goes to the heart of the control problem.

Attendees stated that organizations may choose to avoid monitoring due to its cost, implying a need either for "making monitoring cheap enough to be practical, or [for] companies being willing to engage in more expensive monitoring." Baker et al. [70] predict a future where monitoring will be more expensive for agents: "Model developers may be required to pay some cost, i.e., a monitorability tax, such as deploying slightly less performant models or suffering more expensive inference, in order to maintain the monitorability of… agents." The increased "cost structure if a human in the loop [is] required" was pointed out by attendees.

.- We have all heard that the next big thing in AI will be "world models." What we did not see coming is that the first one to reach the market would be trained on aaaall those images captured in the streets by Pokémon Go players! It's 2016 all over again!!

📃The paper of the week

.- Untangling AI Liability by Kenneth S. Abraham and Catherine M. Sharkey. This demystifying paper proposes a traditional legal framework for civil liability for harm caused by AI. It argues that many challenges of the "black box problem" can be resolved with classic principles. The real challenge is not technical but jurisprudential: choosing between uniform and context-specific liability rules. Answering their own question, the authors favor a diverse approach, applying negligence, strict liability, or specific legislation depending on the context.

.- User Privacy and Large Language Models: An Analysis of Frontier Developers' Privacy Policies by Jennifer King, Kevin Klyman, Emily Capstick, Tiffany Saade, and Victoria Hsieh. By default, the major AI providers train on your conversations unless you opt out, and consumers and minors are less protected than enterprise customers. But the main takeaway is that chats capture not only inputs but also reasoning, vulnerabilities, preferences, and latent identity signals. It amounts to "cognitive surplus extraction": what gets monetized is not scattered attention but lines of thought structured enough to serve as training infrastructure.

📎Useful tools

.- Jorge Morell, the megabot we all mistakenly take for a human, has built an AI compliance checker in Claude, based on the toolkit the ICO released as an Excel file. Judge for yourselves how useful Jorge's improvements have made it: a tutorial with 4 pop-up windows, a Spanish translation, adaptation to the (EU, not UK) GDPR and the AI Regulation, and the bonus that it saves your information if you have a Claude account.

🙄 Da-Ta-dum bass

If you think this newsletter might appeal to someone, or even be useful to them, forward it.

If you feel we missed a doc, comment, or silly thing that clearly should have been in this week's Zero Party Data, write to us or leave a comment and we'll consider it for the next one.