Things are moving this week in Brussels. On Monday, the EDPB held a conference “Cross-regulatory interplay and cooperation in the EU: a data protection perspective” with three panels on three of the major regulations:

Competition vs GDPR: guidelines were promised, to be drawn up with the Commission;

DMA vs GDPR: there are guidelines here, but it seems the speakers highlighted that it should be made clearer that the DMA does not take precedence over the GDPR; and

DSA vs GDPR: there are also guidelines here, but the discussion turned to the big topic of age verification and the need to apply both regimes together: “The panel concluded with a clear message: online safety under the DSA and the lawful processing of personal data are two sides of the same coin, both calling for the DSA and the GDPR to be read coherently.”

And today, the AI part of the Digital Omnibus simplification package is due to be voted on in plenary. Then on to the usual trilogues.

Tomorrow we will participate via Webex in the EDPB public hearing on its Guidelines on the processing of personal data to target or deliver political advertisements.

You are reading ZERO PARTY DATA. The newsletter on current affairs and technology law by Jorge García Herrero and Darío López Rincón.

In the free time this newsletter leaves us, we solve complicated messes related to personal data protection and artificial intelligence regulations. If you have one of those, wave your little hand at us like this. Or contact us by email at jgh(at)jorgegarciaherrero.com

🗞️News from the Data World 🌍

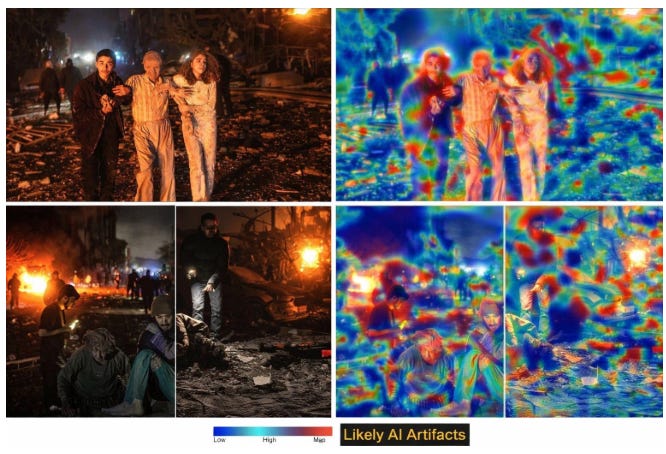

.- In an upcoming newsletter we will analyze the transparency obligations imposed by the AI Act on synthetic image, video and audio content (not necessarily deepfakes). One striking novelty is the combination of marking—so machines can identify it—and labeling—for humans. These marks must make the work easier for fact-checkers, journalists, and academics.

What this bald guy didn’t see coming were fake fact-checking systems that question the truthfulness of real images, like those that have appeared amid the flood of AI slop related to the USA-Israel-Iran war.

In an unprecedented plot twist, these “fake verification outlets” erode trust in real evidence, and fabricated analyses destroy trust in verification itself.

.- How many times has Elon Musk said he was going to pay someone something and then didn’t do it? For the richest man in the world, quite a lot. Better not draw conclusions.

.- OpenAI’s last few months: (i) it announced advertising within the chat; (ii) then a version of ChatGPT for adults; (iii) this very week it is offering bonds with more than 17% “guaranteed” interest; and (iv) just yesterday it announced the disappearance of Sora, its much-hyped synthetic video platform/social network.

I’m not an economist, but for some reason this beautiful video comes to mind.

📄High density docs for data junkies☕️

.- Reading the AEPD and CNMC post on Article 28 of the DSA (the one that supports age verification as a legal obligation), two things come to mind:

That, given the AEPD’s €950,000 fine on Yoti (€500K for the Article 9 issue alone), the dual approach the Commission included in its guidelines on the matter has aged poorly: “Age estimation methods can complement age verification technologies and can be used in addition to the former, or as temporary alternative in particular in cases where verification measures that respect the criteria of effectiveness of age assurance solutions.”

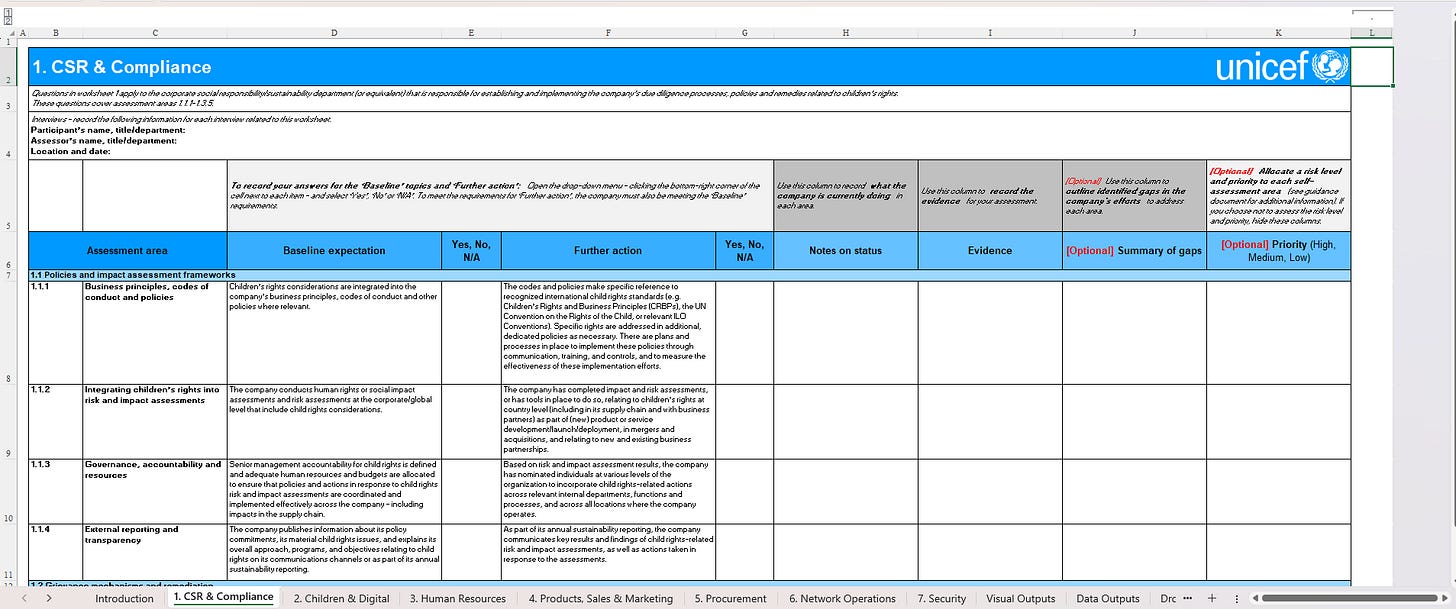

That the Commission itself mentioned a Child Rights Impact Assessment (CRIA). Which one exactly? The one UNICEF lets you download from its website. Yes, this “CRIA” now joins the LIA, TIA and FRIA.

.- A good summary post by Vadym Honcharenko with the latest contributions to the debate on how that fluid and volatile—but ultimately critical—concept of personal data stands. The post compiles readings following the initial debate of September 25, with analyses by Peter Craddock, Sophie Stalla-Bourdillon on lessons from the SRB case, and Phil Lee on the risks of building data protection on shifting sands. Our humble contribution here.

.- Remember that the GDPR does not prohibit reusing data for a different purpose, only for purposes incompatible with those originally legitimized? The ICO has updated its guidance detailing when and how controllers can reuse personal information for a purpose different from the original under the UK GDPR as reformed by the DUAA 2025. The guidance introduces a clearer compatibility test and lists (in a new Annex 2) the reuses that the UK GDPR now treats as automatically compatible.

It’s true that on this topic the UK has diverged too much from the GDPR. For a concrete, close analysis of the compatibility of secondary purposes under the GDPR, the best place to start is the CJEU’s Digi case, which we covered years ago.

.- Via Carlo Piltz: The Bavarian authority requires a private communication channel between data subjects and the DPO, separate from the organization’s general support channel. Watch out.

💀Death by Meme🤣

🤖NoRobots.txt or The AI Stuff

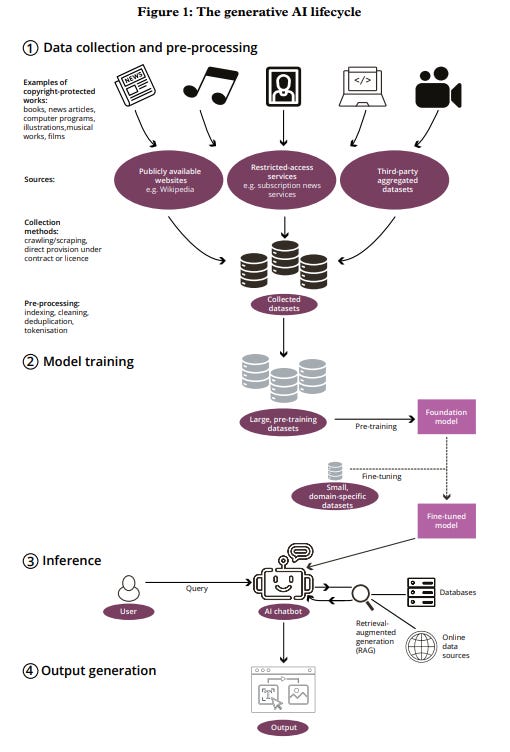

.- Document from a UK House of Lords committee (our senate, but with pedigree) on generative AI: “AI, copyright and the creative industries”. It’s from February, but no less interesting for that.

It focuses on putting forward considerations and recommendations to the Government on how to approach it without wiping out intellectual property and the “creative industries.”

-Better to drop exceptions for Text and Data Mining (TDM), i.e., for the use of IP-protected sources, where the only safeguard is an opt-out that rightsholders would have to set up to tell the data-hungry AI that it cannot use their work. What has been seen in the EU does not offer much reassurance about such safeguards.

175. A broad TDM exception with an opt-out rights-reservation mechanism, as envisaged under “option 3” of the Government’s consultation, is not a viable foundation for the UK’s AI and copyright regime. The tools currently available for rights reservation are fragmented, poorly understood and place an unreasonable burden on individual creators.

176. Experience in the EU suggests that comparable provisions have neither delivered reliable control for rightsholders nor a robust licensing market. We welcome the Secretary of State’s acknowledgement that there is currently no workable opt-out proposal on the table and consider that the Government should now draw a clear line under this approach. The UK’s starting point should remain a licensing-first model in which permission is required to use copyright-protected works for AI training.

And that if companies are asking for a TDM exception, it’s because they know what they’re doing is not legal.

36. (…..). However, the consistent call from technology sector stakeholders for a new, broad commercial text and data mining (TDM) exception expressly to enable more AI model training to take place in the UK suggests that they do not regard large-scale commercial training on copyright-protected works as clearly covered by the existing exceptions. If they did, a commercial TDM exception would be unnecessary.

-Do not modify intellectual property law to fit AI, and treat this “learning” as “reproduction”: they call it learning, but by pure intellectual property standards it is reproduction:

38. (….) We do not accept the view that the copying and processing of protected works during training should be characterised as ‘learning’.

39. We therefore consider that the Government should rule out any reform of the Copyright, Designs and Patents Act that would remove the incentive to license copyrighted works for AI training, and should instead focus on strengthening licensing, transparency and enforcement within the existing framework.

-The gap in the protection of style, i.e., stopping AI from being asked to generate something as if the author had made it. The line is thin, though, so such uses are hard to sue over today.

83. The absence in UK law of a robust personality right or specific protection for digital likeness means that creators and performers lack an adequate basis to challenge harmful outputs that imitate their distinctive style, voice or persona without reproducing a specific underlying work.

84. The Government should introduce protections against unauthorised digital replicas and ‘in the style of’ uses. Any new framework should give creators and performers clear, enforceable control over the commercial exploitation of their identity, while appropriately safeguarding freedom of expression and other legitimate uses.

-A nice infographic of the generative AI flow:

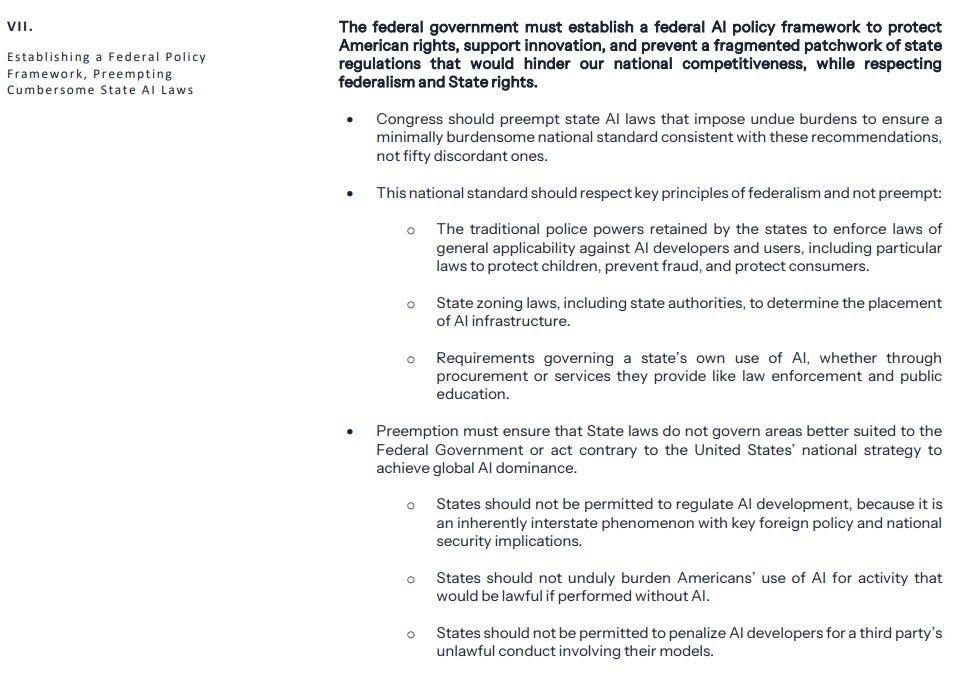

.- The regulatory framework on AI released by the White House might not seem to contain anything unusual (beyond the fact that it says “Trump” and that the protection of minors almost suggests it’s Melania’s idea). The problem is that Trump doesn’t do anything without intent. Most of the document contains sensible proposals for Congress: age verification and platform oversight; ensuring citizens don’t bear the cost of the AI mega-industry’s increased electricity consumption; anticipating research and appropriate measures against identity theft and fraud; and not legislating anything that removes judicial authority to decide on IP infringement. But at the end it drops the bomb, a subtle way of waging war against Anthropic and anyone who refuses strict AI control: “act contrary to the United States’ national strategy to achieve global AI dominance.”

📃The paper of the week

.- This paper by Cristiana Santos, Sanju Ahuja, Johanna Gunawan and Nataliia Bielova proposes an analytical framework to assess dark patterns under the Digital Services Act, identifying obstacles to specific user rights: making complaints harder (Article 20), obscuring advertising transparency (Article 26), manipulating notice-and-action mechanisms (Article 16), distorting user choice regarding recommender systems (Articles 27 and 38), and interfering with minors’ rights (Article 28).

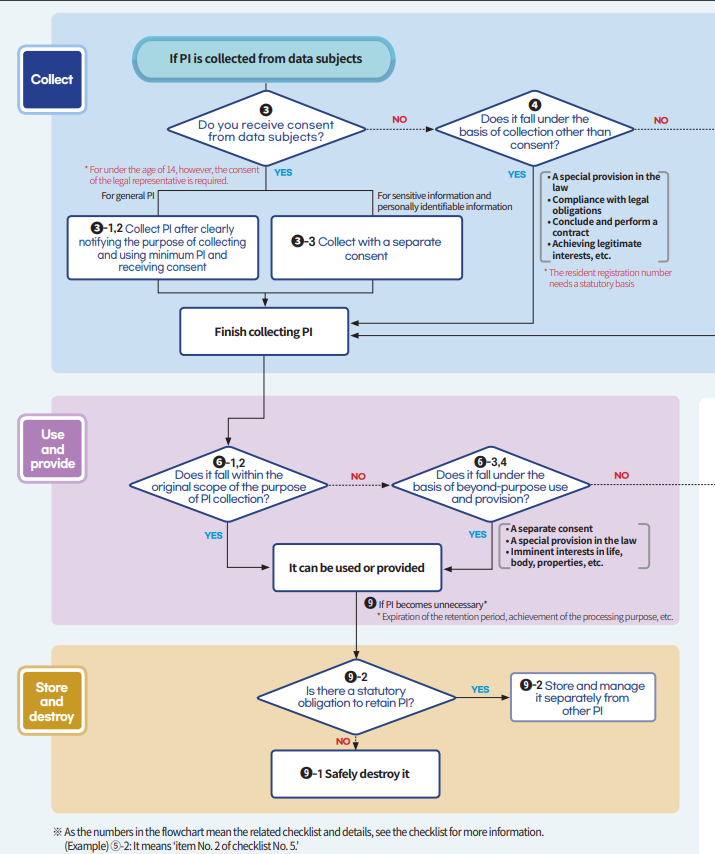

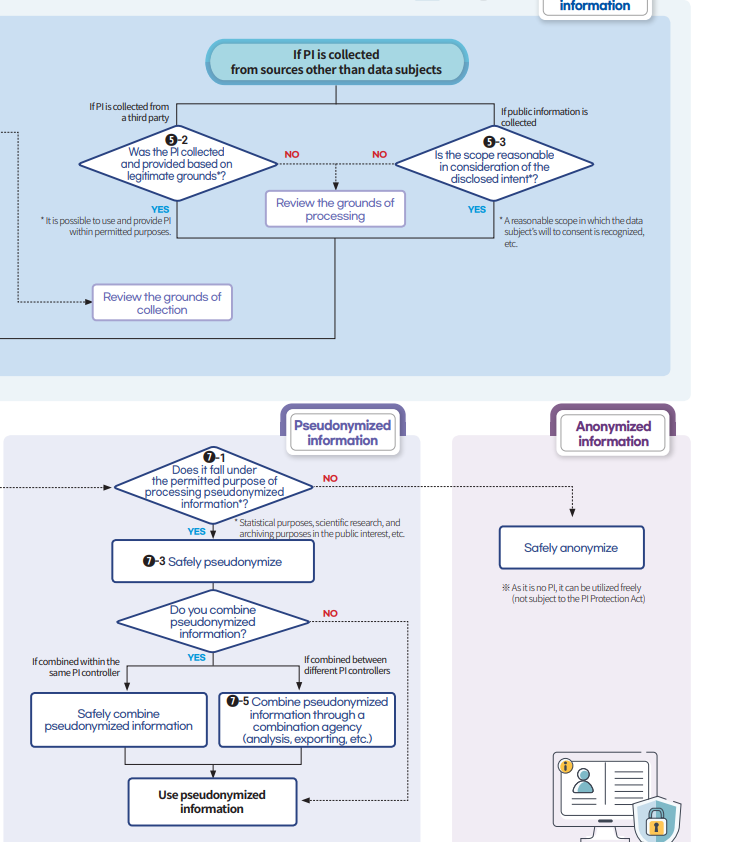

.- It’s not exactly a paper, but it stays here to avoid overloading the AI section. The Korean data protection authority released an interesting checklist in 2021: Artificial Intelligence (AI) Personal Information Protection Self-Checklist. Although time has passed, it’s still worth a look. Seen via San Luis Montezuma.

The checklist itself for core AI cases;

Very clear data processing flows:

🙄 Da- Tadum Bass!

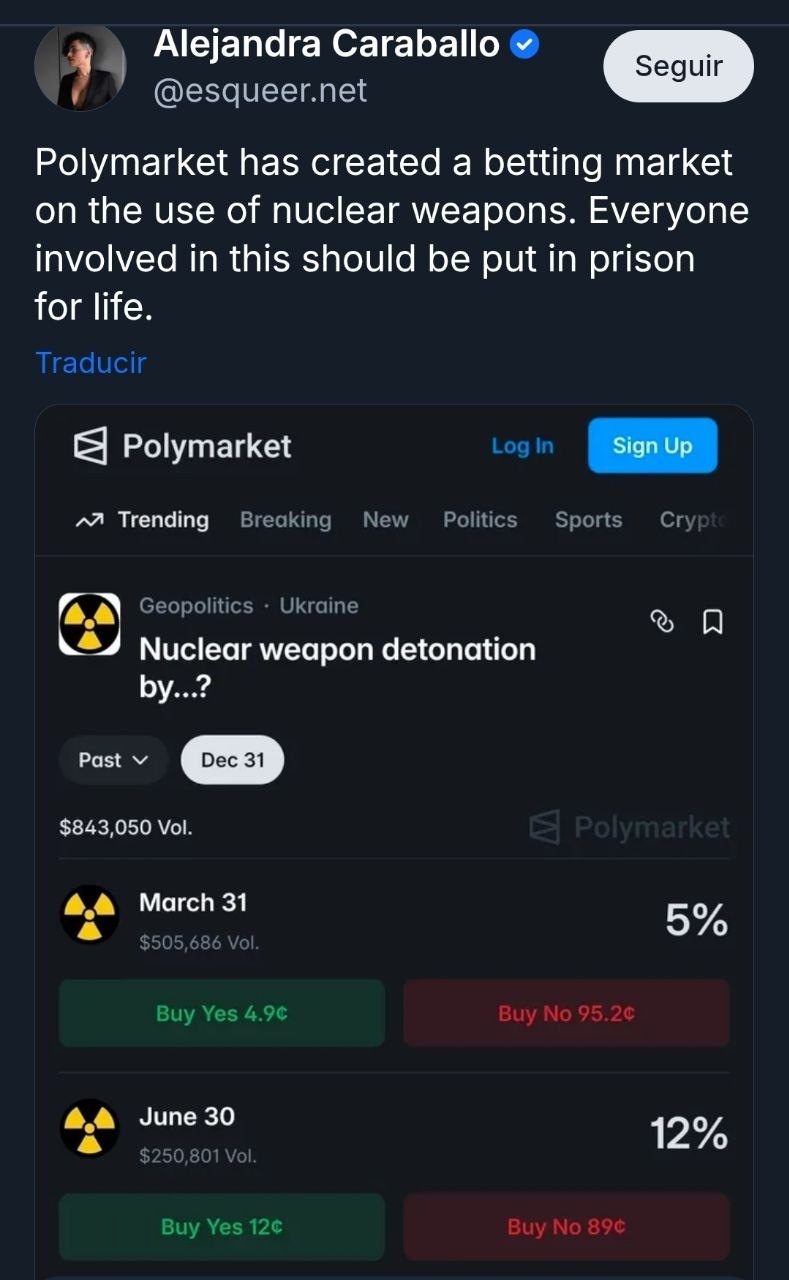

Atompunk is the genre or aesthetic style that tries to reflect a post-nuclear Cold War dystopia.

The key point is that it would be preferable for it to remain fiction to liven up works like Fallout, Stalker or Metro.

If you think this newsletter might appeal to and even be useful to someone, forward it to them.

If you miss any doc, comment, or dumb thing that clearly should have been in this week’s Zero Party Data, write to us or leave a comment and we’ll consider it for the next one.