Starting from this screenshot of Aurelie Pols’ apt comment that a good data clean room would make sense here, we felt like taking a quick look at the campaign to open this newsletter a little differently. A snapshot of our times.

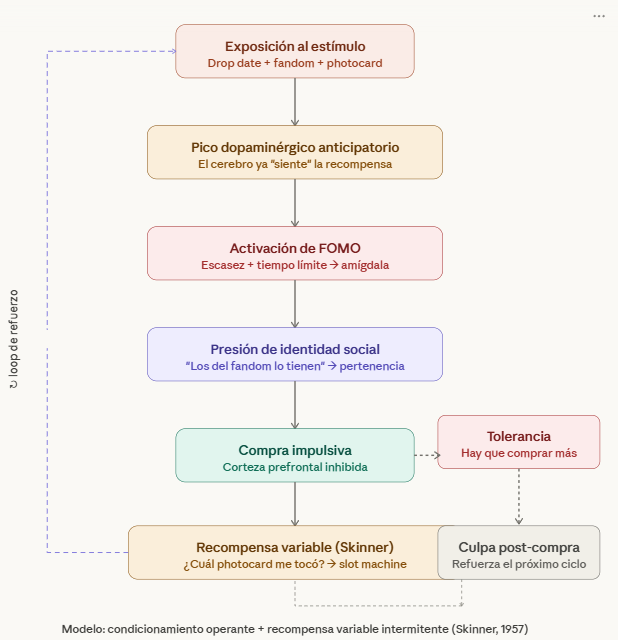

With everything coming down the line in terms of future regulation, with court cases in which internal reports keep surfacing that show every trick of applied psychology in the book being used to cause real harm to minors and adults alike, and with authorities becoming ever more aware of what addictive patterns are, along come the people from the big arched M with an aggressive marketing campaign built on scarcity, FOMO, and data collection:

Using it to harvest good data through the app. A classic among classics;

Taking advantage of the current obsession with opening Pokémon packs (physical and virtual), and not without reason: the rarest random cards you might pull here are already known to be worth a lot. Don’t ask about the obscene prices people ask and pay for Pokémon cards (especially “graded” ones); and

Taking advantage of Gen Z’s K-pop obsession to hit them with every visual stimulus available and a full-blown Skinner-box scheme that keeps them buying until they have collected everything.

And after all this, we get to watch the company’s CEO pretending to bite into the wonderful, not-at-all-bad hamburger they sell. P.S. Krusty already did it in The Simpsons.

You are reading ZERO PARTY DATA. The newsletter on current affairs and technology law by Jorge García Herrero and Darío López Rincón.

In the free time this newsletter leaves us, we solve complicated messes related to personal data protection and artificial intelligence regulations. If you have one of those, give us a little wave. Or contact us by email at jgh(at)jorgegarciaherrero.com

🗞️News from the Data World 🌍

.- The latest in the Gallagher-style AI feuds: while OpenAI shuts down Sora and gives up on its “huge project” for an adult NSFW ChatGPT, Anthropic accidentally leaks all-the-co-de of Claude (!!!!). But the very latest news is that Anthropic is doing a limited release of Mithos, its latest model, given how easily it finds zero-day vulnerabilities in “any software.”

And it’s only Thursday, Captain Haddock.

.- Noyb reports that in March the French Council of State dismissed Criteo’s appeal against the famous multimillion fine it received for doing its adtech thing badly. It seems that arguing, at this stage of the game, that pseudonymous identifiers associated with IP addresses (a textbook example of personal data) and “other browsing data” are not personal data did not sit well at all with the Council of State. Especially when we all know they cross-reference a thousand things, and that what matters to them is being able to single you out through that IP and browsing history, with no interest whatsoever in your name and surname.

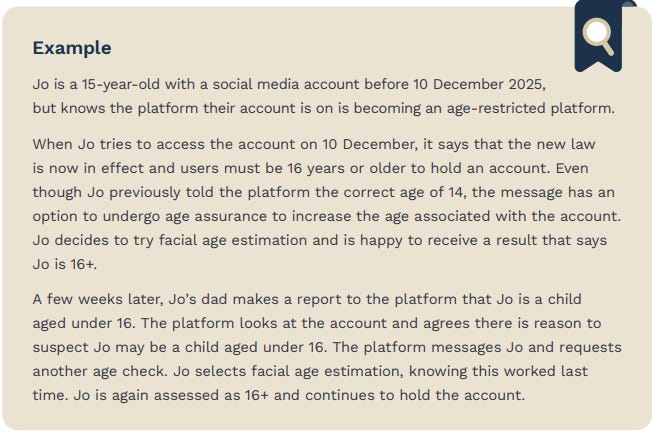
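To see why the “we don’t even know your name” argument falls flat, here is a minimal Python sketch, assuming a hypothetical tracker that keys its profiles on a salted hash of the IP address. This is not Criteo’s actual system: the sites, IPs (taken from documentation ranges), and salt are all made up. No name is ever stored, yet every event from the same IP lands in the same profile, which is exactly what singling out means.

```python
import hashlib
from collections import defaultdict

def pseudonymize(ip: str, salt: str = "hypothetical-salt") -> str:
    # Stable salted hash: the same IP always yields the same pseudonym,
    # and no name or email is involved at any point.
    return hashlib.sha256((salt + ip).encode("utf-8")).hexdigest()[:12]

# Hypothetical browsing events collected on three unrelated sites.
events = [
    ("news-site.example", "203.0.113.7", "/politics/article-1"),
    ("shop.example", "203.0.113.7", "/cart/baby-stroller"),
    ("health.example", "203.0.113.7", "/symptoms/insomnia"),
    ("shop.example", "198.51.100.23", "/cart/running-shoes"),
]

# Link every event to its pseudonym: one profile per person behind an IP.
profiles = defaultdict(list)
for site, ip, path in events:
    profiles[pseudonymize(ip)].append(site + path)

for pseudonym, history in profiles.items():
    print(pseudonym, history)
```

The salt does not help here: it only changes what the pseudonym looks like, not the fact that it is stable and ties the news, shopping, and health visits together into one profile.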

.- The Australians are finding out that the new minimum-age regulation for social networks does not stop nature from finding a way: Social Media Minimum Age: March 2026 Compliance update. The safeguard meant to minimize the margin of error in practice, namely the right to challenge the decision of the system that classifies you as a minor or an adult, has been turned on its head by reality. On paper it is framed as a wonderful safeguard, but minors see it as “just try again until it beeps.” We saw Peter Craddock’s comment on the document on LinkedIn, but we went to the document itself to look for those specific highlighted observations:

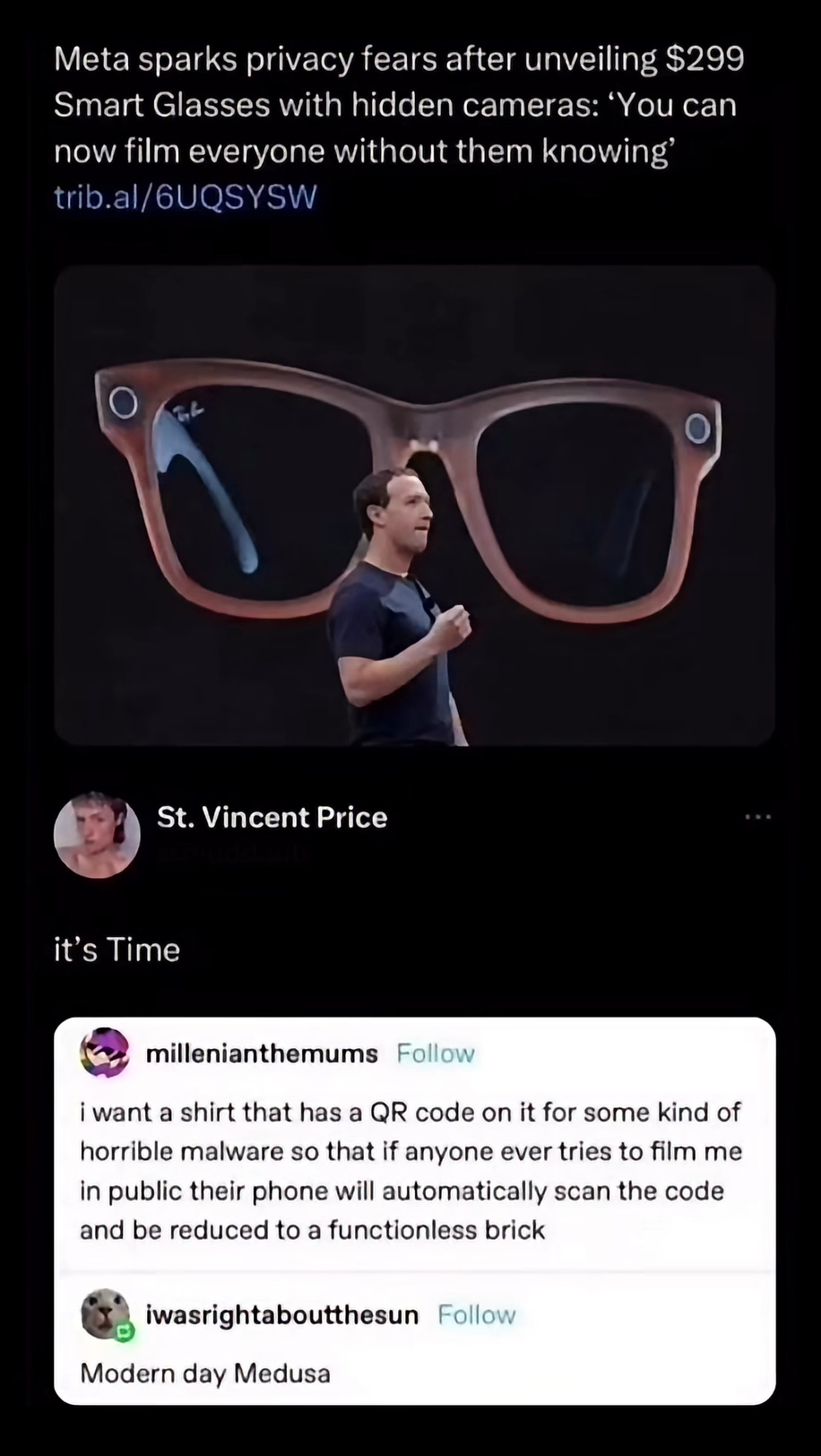
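The arithmetic behind “try again until it beeps” is simple. A minimal Python sketch, assuming (for illustration only) that each attempt is independent and that the age-estimation system wrongly classifies a given minor as an adult with probability `p` per attempt: the chance of getting through at least once in `n` attempts is `1 - (1 - p)^n`, which climbs quickly as attempts pile up.

```python
def prob_pass_at_least_once(p_false_adult: float, attempts: int) -> float:
    """Probability that a minor is classified as an adult at least once
    in `attempts` tries, assuming independent attempts (a simplification)."""
    if not 0.0 <= p_false_adult <= 1.0:
        raise ValueError("probability must be in [0, 1]")
    if attempts < 0:
        raise ValueError("attempts must be non-negative")
    # Complement of "misclassified as a minor every single time".
    return 1.0 - (1.0 - p_false_adult) ** attempts

# Hypothetical 10% per-attempt error rate for a given minor.
for n in (1, 5, 10, 20):
    print(n, round(prob_pass_at_least_once(0.10, n), 3))
```

With that hypothetical 10% error rate, ten attempts already give roughly a 65% chance of getting through. Real errors are probably not independent between attempts (the same face tends to get similar estimates), so treat this as an intuition, not a measurement.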

.- This article by John Wilkins portrays the expansion of surveillance devices that are voluntarily made visible, almost totemic, presented as preventive crime deterrence. The moral is that surveillance is no longer hidden, but rather displayed as a message. Umberto Eco would praise its semiotic function: these artifacts are designed to generate “anticipatory obedience.”

.- Meta bad, but losing Section 230 worse. This fine piece by Ryan Broderick contrasts two poisons **so you can choose yours**: Meta’s moderation and design failures versus the systemic risk of weakening Section 230, the Yankee liability shield. The counterintuitive argument is that punishing platforms may end up damaging the information and expression ecosystem more than it corrects specific abuses. But careful, friends: the author is not defending Meta (unlike all those former heads of enforcement authorities who rush to big law firms to do just that as soon as they leave office); what he is defending is the legal architecture that prevents an internet dominated only by actors who can afford litigation and massive preventive filtering.

.- An interesting article from a foreign outlet on LaLiga’s well-known abuse of blocking the websites of all and sundry during football match broadcasts. What stands out is that it describes the ecosystem in which the holders of broadcasting rights operate as that of a true banana republic.

📖 High density docs for data junkies ☕️

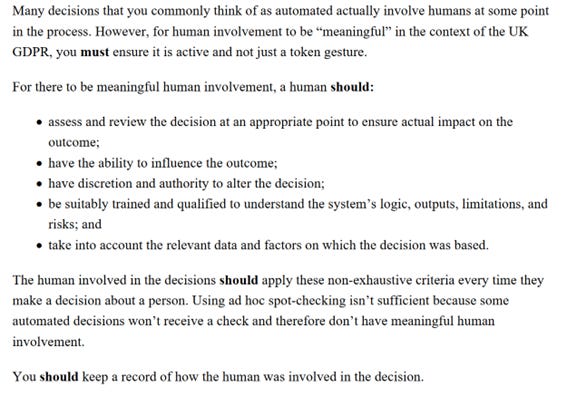

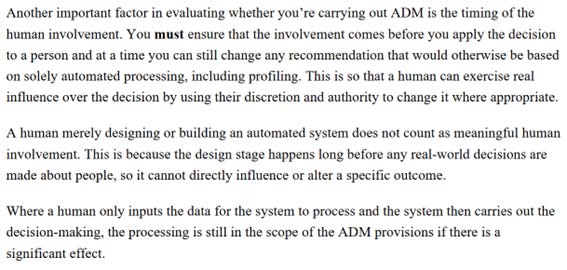

.- The ICO has put its guidance on automated decision-making out for public consultation. Keep in mind that it is based on the UK GDPR, but it does contain some very applicable points:

Real human intervention, not tokenistic: documented, and early enough that there is still time to make meaningful changes to the processing.

The problem of consent when the data is obtained indirectly. The ICO calls this “invisible processing,” because the data subject does not even realize what is being done.

“You should remember profiling can often be invisible to people. For example, if it involves personal information that you obtain from somewhere other than directly from the person themselves. This sort of invisible processing may mean that it is challenging for you to show that you have valid consent because it isn’t informed or specific in these cases. People also have the right to withdraw their consent at any time. You must make it as easy for them to withdraw consent as it was to give it.”

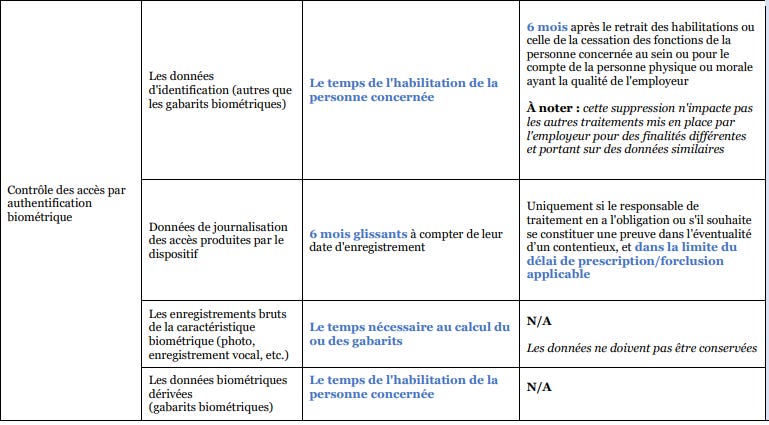

.- The CNIL has published a document on retention periods in the employment context. Very tabular, and without many surprises. What they call the intermediate archiving period is, in the end, the same as our Spanish peculiarity of data blocking under Article 32 of the LOPDGDD. It includes details on biometric access, and even a breakdown concerning the staff themselves.
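The three-phase logic behind those tables (active use, then intermediate archiving or blocking, then deletion) is easy to encode. A minimal Python sketch, assuming a single hypothetical archiving period per record and approximating a year as 365 days; the real periods must come from the CNIL tables or the applicable limitation periods, not from this example.

```python
from datetime import date, timedelta
from enum import Enum
from typing import Optional

class Phase(Enum):
    ACTIVE = "active use"
    INTERMEDIATE_ARCHIVE = "intermediate archiving (blocking, art. 32 LOPDGDD)"
    DELETE = "delete"

def retention_phase(purpose_ended: Optional[date], archive_years: int, today: date) -> Phase:
    """Classify a record into a retention phase.

    purpose_ended: date the original purpose ended (e.g. end of the
        employment relationship), or None if it is still ongoing.
    archive_years: hypothetical length of the intermediate archiving period.
    """
    if purpose_ended is None or today < purpose_ended:
        return Phase.ACTIVE
    # Simplification: a year is approximated as 365 days.
    archive_end = purpose_ended + timedelta(days=365 * archive_years)
    if today < archive_end:
        return Phase.INTERMEDIATE_ARCHIVE
    return Phase.DELETE

# Hypothetical example: employee left on 30 June 2022, 5-year archiving period.
print(retention_phase(date(2022, 6, 30), 5, date(2026, 4, 9)))
```

During the middle phase the data is kept but blocked, not used: that is the part both the CNIL and Article 32 LOPDGDD insist on, and the part a simple "keep or delete" flag tends to miss.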

.- The Spanish Supreme Court applies the Scania doctrine. The other way around, but it applies it. And along the way it says that all those guides and guidelines are worth what they are worth: as “notorious facts,” not as a source of law.

.- An interesting judgment sanctioning a detective agency for capturing images of a worker on sick leave on the balcony of her home. She was on the balcony, yes, but she was at home. So be careful.

.- Does National Security Scheme certification serve as a mitigating factor in liability for non-compliance with the GDPR? Francisco Javier Sempere and the National Court have the answer.

.- The Most Holy Luis Montezuma brings us, in a single skeet, links to the **English** versions of the AI laws or bills of Japan, Korea, Vietnam, and Taiwan.

.- The FPF’s in-depth articles on ADM and AI Act issues are highly recommended, especially this one by Desara Dushi on the prohibition of emotion recognition.

.- When the AEPD bars the door, biometrics come in through the window. No, this is not a faithful summary of Patricia Chenlo’s post, but never let the truth ruin a good headline: Patricia comments on the Draft Law on the protection and resilience of critical entities, which enables biometric authentication and identification for access to critical entities on the grounds of legal obligation and public interest, with a mandatory DPIA and a ban on employment or timekeeping uses.

.- Don’t miss the hair-raising statement by the AEPD in this decision declaring the proceedings over a SEUR security breach lapsed. The credit for separating the wheat from the chaff goes entirely to Maria Luisa González Tapia.

.- Capilano University conducted this PIA on a proctoring application. So far, nothing new. What is funny about this PIA is that… it found insufficiently mitigated risks and rejected the processing.

.- A delicacy: a coloring book, in the literal sense of the term, explaining to children what a data center is. What on earth is this resource doing in this newsletter, and in this section? Click the link and then tell me: not even I, spy that I am, could have found material this breathtakingly mordant. The authors of this marvel are Melissa Tamberg, Mike DeCamp, Laura Scheving, and Luci Gardner.

.- The crisis of consent in contexts involving AI agents. This post does not stand out for its technical depth in legal issues (which it does not claim to have), but for its ability to illustrate the inherent difficulties in the new contracting and operating flows of aaall those agents (in that, it has plenty). Via the great Joan Sardá.

💀Death by Meme🤣

🤖NoRobots.txt or The AI Stuff

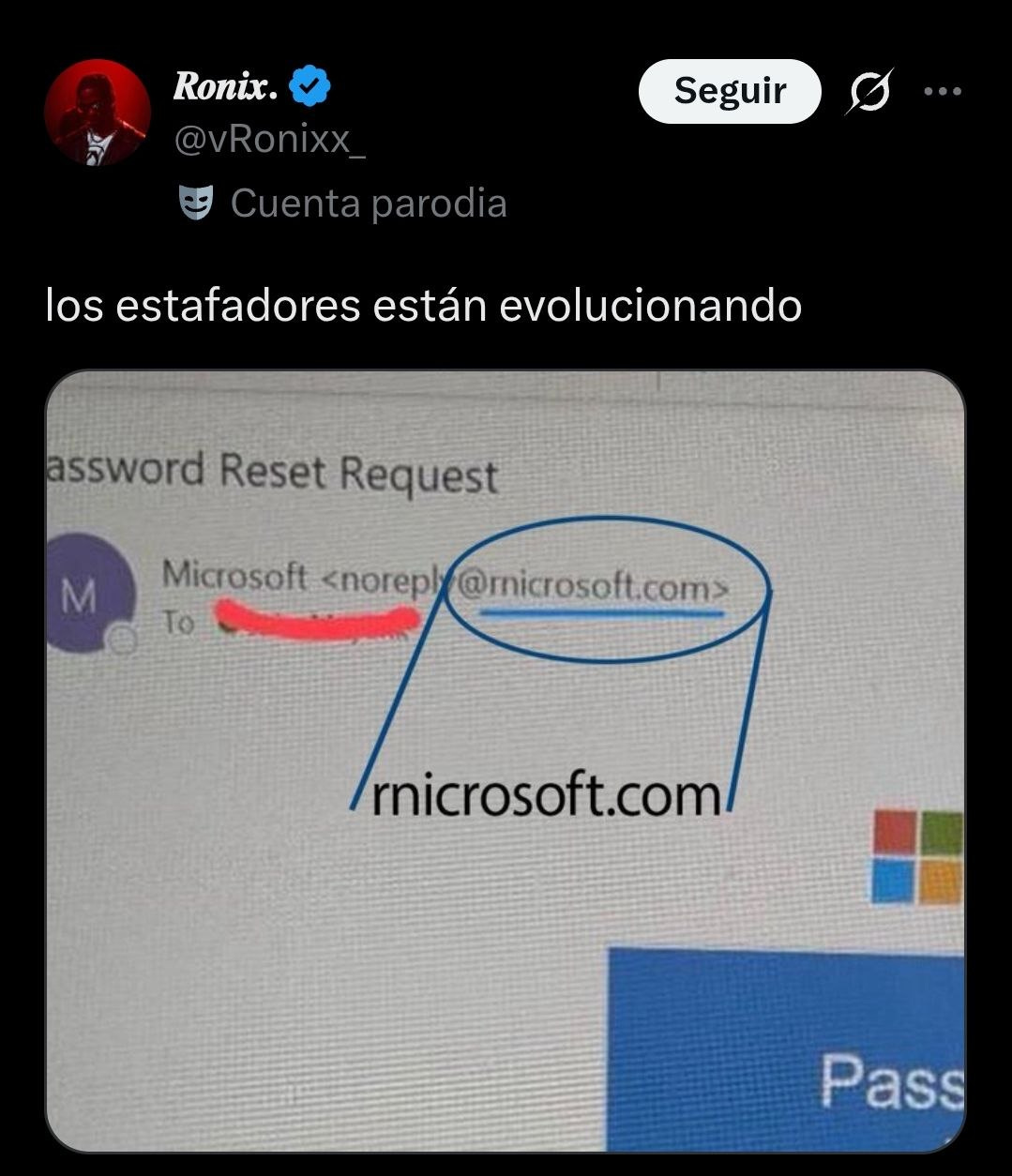

.- I highly recommend reading the description of this scam targeting an Axiom developer that allowed attackers (who pretended to be a startup in order to get a Teams call with their victim) to take control of his access credentials and introduce malicious software among the company’s legitimate libraries. Fine stuff.
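One of the standard defenses against this kind of supply-chain attack is pinning dependencies by cryptographic hash. npm lockfiles, for instance, store an `integrity` field in Subresource Integrity format (the algorithm name, a hyphen, and a base64 digest, e.g. `sha512-…`). A minimal Python sketch of that check, using made-up tarball bytes rather than a real package:

```python
import base64
import hashlib
import hmac

def sri_digest(data: bytes, algo: str = "sha512") -> str:
    """Return a Subresource Integrity string such as 'sha512-<base64>'."""
    digest = hashlib.new(algo, data).digest()
    return f"{algo}-{base64.b64encode(digest).decode('ascii')}"

def matches_integrity(data: bytes, expected: str) -> bool:
    """Check downloaded bytes against a pinned SRI string."""
    algo, _, _ = expected.partition("-")
    if algo not in {"sha256", "sha384", "sha512"}:
        raise ValueError(f"unsupported algorithm: {algo}")
    # Constant-time comparison of the two SRI strings.
    return hmac.compare_digest(sri_digest(data, algo), expected)

# Hypothetical usage: pin the hash once, then verify what you download later.
pinned = sri_digest(b"original tarball bytes")
print(matches_integrity(b"original tarball bytes", pinned))  # True
print(matches_integrity(b"tampered tarball bytes", pinned))  # False
```

Note the limit: a hash pin only detects tampering with a version you have already locked. If attackers use stolen credentials to publish a new malicious version and you upgrade to it, the lockfile will happily record the new hash.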

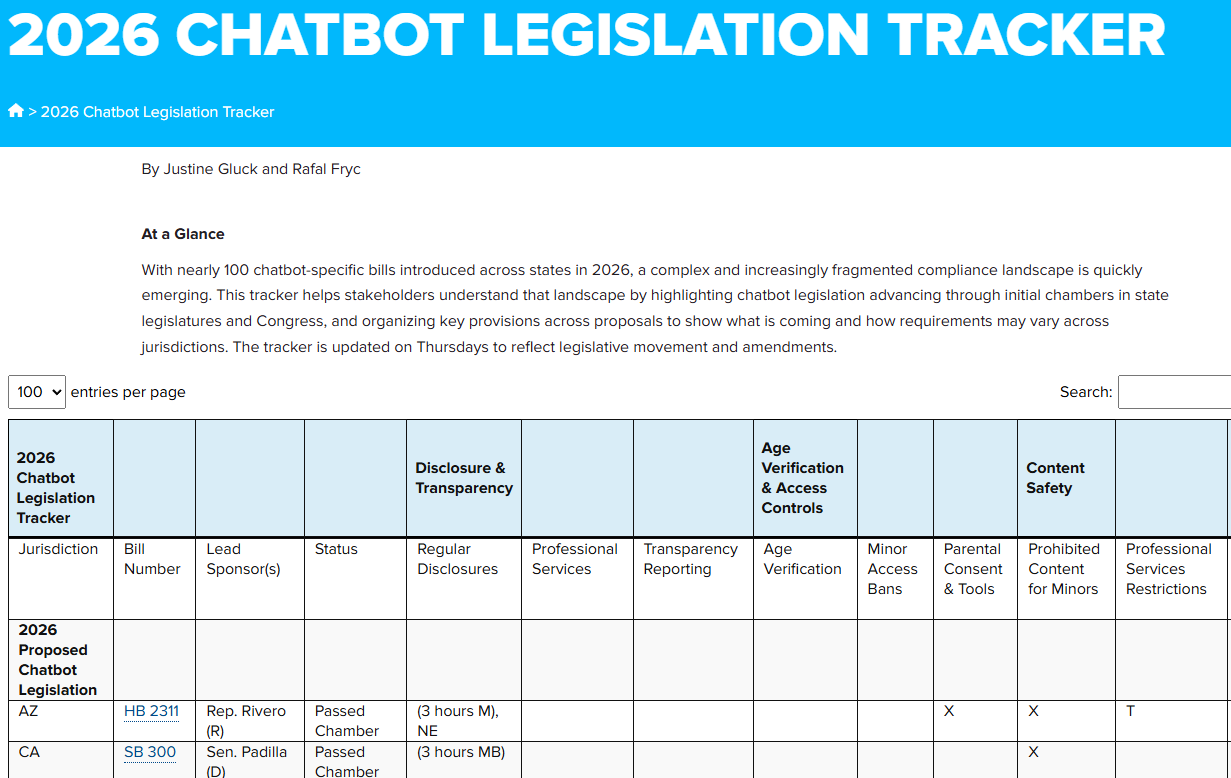

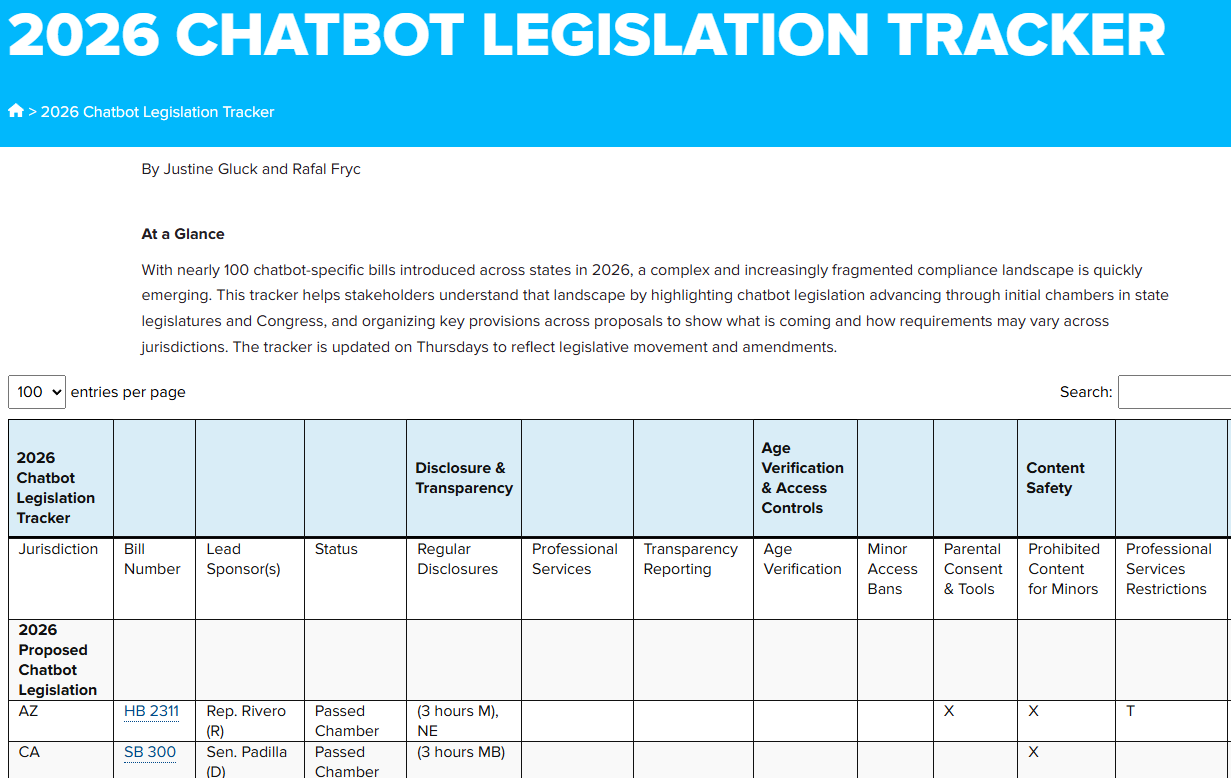

.- US states’ regulations always feel very far away from us, but IAPP-style compilations and visualization tools keep coming out. Now a tracker has been added on the emerging chatbot regulations (and surely agent regulations too) in the states working on them, via the FPF (Future of Privacy Forum).

📃The paper of the week

.- It is not a paper, but another reflection on how AI use erodes the cognitive capacities of people who are still in training and who, inevitably, use and abuse AI models. Brilliant.

.- This one really is a paper. A veeery suggestive paper: “Large language models (LLMs) sometimes appear to exhibit emotional reactions.(…) Our key finding is that these representations causally influence the LLM’s outputs, including Claude’s preferences and its rate of exhibiting misaligned behaviors such as reward hacking, blackmail, and sycophancy.” (Well, would you look at that!).

📎Useful tools

.- Are Claude’s various usage limits starting to eat your afternoon snack? We have a few little tips for you.

.- An AI-vibecoded document anonymization tool. The most promising-looking one among all those roaming around, IMHO.

.- If, like me, you are no longer a Python neophyte and have spent years creating and forgetting virtual environments here and there, this tool to locate and delete them is very likely to come in (heh) handy.
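We have not looked at that tool’s internals, but the core idea is easy to sketch. Environments created with Python’s standard `venv` module (and recent `virtualenv` versions) contain a `pyvenv.cfg` file at their root, so a minimal Python sketch can walk a directory tree, report every such environment with its size, and delete only when explicitly asked to (dry run by default). Treat the `pyvenv.cfg` heuristic as an assumption: conda environments, for example, do not use it.

```python
import os
import shutil
from pathlib import Path
from typing import Iterator, List

def find_venvs(root: Path) -> Iterator[Path]:
    """Yield directories under `root` that contain a pyvenv.cfg file."""
    for dirpath, dirnames, filenames in os.walk(root):
        if "pyvenv.cfg" in filenames:
            yield Path(dirpath)
            dirnames.clear()  # do not descend into the environment itself

def dir_size(path: Path) -> int:
    """Total size in bytes of regular files under `path` (symlinks skipped)."""
    total = 0
    for dirpath, _dirnames, filenames in os.walk(path):
        for name in filenames:
            full = os.path.join(dirpath, name)
            if not os.path.islink(full):
                try:
                    total += os.path.getsize(full)
                except OSError:
                    pass  # file vanished or is unreadable; ignore it
    return total

def clean_venvs(root: Path, delete: bool = False) -> List[Path]:
    """List (and, only if delete=True, remove) virtual environments."""
    found = list(find_venvs(root))
    for venv in found:
        size_mb = dir_size(venv) / 1_000_000
        action = "deleting" if delete else "would delete"
        print(f"{action} {venv} ({size_mb:.1f} MB)")
        if delete:
            shutil.rmtree(venv)
    return found
```

For example, `clean_venvs(Path.home() / "code")` only lists candidates; `clean_venvs(Path.home() / "code", delete=True)` actually removes them. Review the dry-run list before deleting anything.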

🙄 Da-Tadum-bass

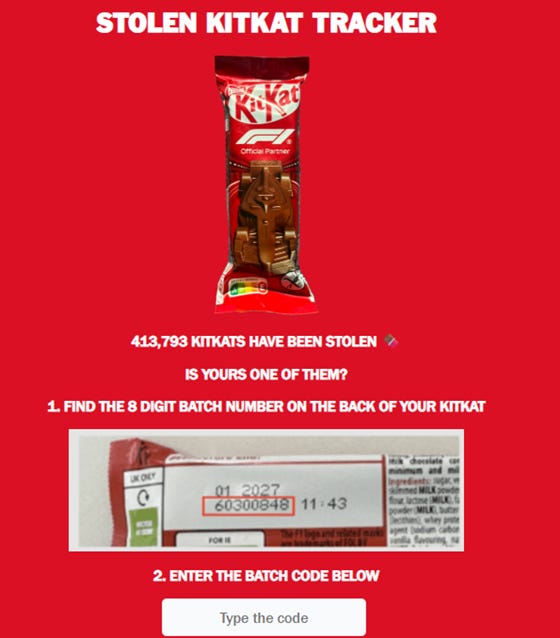

It may seem unrelated. For anyone who has not heard, a Nestlé truck (and its cargo of 400,000 F1-style bars) was stolen on a route between Italy and Poland. Leaving aside that the driver must have been in on it (otherwise the truck, which was surely carrying a tracking beacon, would have been geolocated), Nestlé came up with a landing page seeking citizen collaboration. If you had a bar with one of the affected codes, the page supposedly gave you instructions to notify the company and the authorities it is collaborating with.

The point was to see how that “get in touch” flow had been set up from a data protection perspective. The problem is that the affected code has not been leaked, so we cannot see what appears after entering it; the media have not dug that deep, Reddit has not lived up to expectations this time, and neither Claude nor his friends can provide the code (no, it is not the one in the image).

Unless the thief simply needed a lifetime supply of KitKat, they will most likely not try to sell the bars or put them on the market in their original wrappers. Some thieves are rigorous in their work.

If you think this newsletter might appeal to someone and even be useful to them, forward it to them.