#59 Five Current Limitations of Legal AI

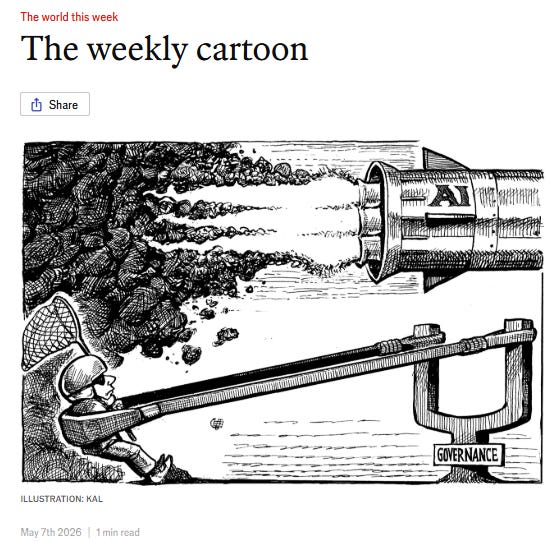

AI is still a tool of varying usefulness

Yesterday Anthropic launched a truckload of legal plugins and everyone is discussing whether this is checkmate for Legora and Harvey and for all lawyers.

Today I want to talk about the structural disadvantages of the most advanced AI versus the dumbest lawyer. Don’t save this post: it’s going to age as badly as the movie Tron.

Surely, SURELY, in a few years we’ll laugh about this perched atop technology that will have solved most of these problems.

I only aspire for the text to be understandable and interesting today.

I have a personal obsession with building a personal assistant based on AI without depending on commercial AIs and that runs locally, on a consumer laptop and trained on my own little legal granary of judgments, papers, and rulings.

I’ve managed to build a tool that I use daily and that is enormously useful to me, but it’s imperfect. I spend quite a lot of time on it, but my aspiration is not to bankrupt Claude or Harvey.

The attraction is how much I learn in every iteration about what works and what doesn’t in each piece of this Meccano set: reranker, citation validation, RRF weights, having a golden standard, using HyDE, BM25.

That’s why, when I saw the German post by @Peter Hense on LinkedIn mentioning some of these concepts, I dove headfirst into seeing what it meant. Peter —with a lot of irony and a very critical view of the lazy use of AI— proposes these questions, I imagine for the bar exam in his country.

Here I translate the questions and answers into human-non-legalese language, because they seemed interesting to me for lawyers, for public officials interested in the use of AI, and for anyone who, like me, is tinkering with information retrieval systems based on more or less sophisticated RAG.

1. QUESTION Discuss the thesis: “RAG systems do not have an inherent model of time.” What consequences does this have for legal research, especially in relation to versions of rules ratione temporis?

Answer: AI does not understand the idea that “later law repeals earlier law”

The AI systems currently used for legal research —the so-called RAG systems, Retrieval-Augmented Generation— work, in very simple terms, like this: you have a huge library of digitized legal documents; when you make a query, the system searches for the semantically “most similar” fragments to your question and delivers them to an LLM so it can build an answer for you.

The good thing is that this overwhelmingly surpasses the keyword searches you could do until now in your files. It will return not the docs containing those literal words, but those discussing the issue or concept asked about.

This is a huge advance. And if the search is launched only over documents you control (your collection of judgments, rulings, papers, guidelines, your best work for clients, validated templates, and so on) you minimize the risk of hallucinations.

But the problem is perverse: semantic similarity is not the same as legal validity.

Imagine you ask the system about the sanctioning regime of Article 83 of the GDPR. The system finds several fragments very “similar” to each other: the original 2016 text, a version with a corrected typo, a rejected draft from the European Data Protection Board, and the current version.

For AI, all these documents are almost identical. The system performs probabilistic rather than deterministic retrieval. Retrieval depends on statistical top-k rankings (I explain this below). Therefore, there is never an absolute guarantee that the legally correct version will appear.

For a jurist, only one of them matters, and it depends on the date of the facts being analyzed.

An AI system can give you an answer based on a repealed rule without any problem if you had it in your database.

And, as happens with personal data, if you want to completely remove obsolete docs from your system, you cannot select which embeddings you want to delete: you have to reprocess the entire dataset all over again.

2. QUESTION: What effects on the use of discretion in the issuance of automated administrative acts derive from the fact that top-k retrieval procedures cannot guarantee exhaustiveness in determining relevant exceptional circumstances?

Answer: Top-k retrieval confirms the rule without being aware of the exception.

When the system retrieves documents, it does not retrieve all relevant ones: it retrieves the k statistically most similar to the query.

If k = 10, the system returns the ten fragments that are semantically most similar to what you asked.

Legal exceptions, by definition, are rare, statistically less relevant, and linguistically atypical. They go unnoticed amid the magma of the document database.

If they fall outside the first ten results, the model does not see them. It acts as if they do not exist.

The model does not “reason” over the entire legal system; it reasons only over a statistical sample of it.

This is the main problem with current AI: it does not know what it does not know.

The ugly example: A public administration uses an AI system to resolve public aid applications. There is case law recognizing an exception to a formal requirement for certain applicants in situations of economic vulnerability.

The case law is scarce, rarely cited, and its terms differ from those usually used in the procedure. It does not appear in the top-k.

The application is automatically denied. The applicant does not know that exception existed. Neither does the system.

Administrative discretion requires the Administration to consider all legally relevant elements, not just statistically frequent ones. A system that only sees what it retrieves, and is completely unaware of what it failed to retrieve, cannot validly justify an administrative act.

It is arbitrary: it produces decisions that appear reasoned because they are well written, but structurally omit elements that should have been weighed and assessed in the resolution.

Ultimately, the non-exhaustiveness of retrieval impacts the legitimacy of discretionary exercise, the reasoning of the resolution, the principle of equality, proportionality, auditability, and the possibility of later review.

3. QUESTION. How does an LLM process distributed evidence when the information relevant to the decision is scattered across several documents (for example, law, regulation, commentary literature, and case law)? Discuss in particular the limits of multi-hop inference.

Answer: The chain of reasoning produces incremental errors

Legal answers always come from different sources which, moreover, have different nature and rank.

The rule is in the law, the development in a regulation, the ratification in a National Court judgment, and the authentic interpretation in a Constitutional Court ruling (I’m looking at you, Gag Law).

The AI system must find precisely all these fragments and connect them with the corresponding hierarchy so as not to screw up massively.

The basic functioning is something like this:

Retrieval recovers several documents.

The LLM encodes tokens through contextual embeddings.

Self-attention establishes probabilistic relationships between fragments.

The model attempts to construct implicit inferential chains.

This process —called multi-hop inference— works reasonably well when the chain is short and the documents are well represented. When there are many hops, reliability degrades incrementally.

If each link has 90% accuracy, a three-link chain already drops to around 73%. With four, 66%.

In law, where one nuance changes the outcome, this is too much noise.

There is also a problem of normative hierarchy: the system does not natively internalize that law prevails over regulation, that special rules displace general ones, or that later law repeals earlier law.

It can reproduce these rules if you explicitly cite them, but it does not apply them systematically and autonomously.

If it is missing one step in this chain, it will invent it.

Even if it gets it right, it will not be able to reconstruct its “legal reasoning” because no such thing actually existed.

It’s like hiring a nerdy junior associate who has read everything, but skipped class the day they explained the sources of law because he went to play cards.

This is one of the most important points that make these tools excellent for support, but zero reliable for resolution: sometimes the result blows your mind and other times you wonder why you keep wasting time with this crap.

4. QUESTION What technical procedures can be used to resolve discrepancies between queries and documents? Why does dense retrieval fail in the legal context?

Answer: The client’s language versus “legalese”

Another problem: the user asks in their own words, the rule is written “in another dialect,” and case law in many cases reformulates it in a third one.

A client asks: “Can I fire my employee if they’ve been on sick leave for three months?”

The law speaks of “termination of the contract for objective causes related to supervening ineptitude or lack of adaptation.”

Case law emphasizes proportionality and the probationary period in Article 52 of the Workers’ Statute.

The AI system may fail to connect these three formulations if their vector representations are sufficiently different.

Solutions?

a) Query Expansion: The original query is expanded (and glabs) replacing synonyms, related concepts, and specialized legal terminology.

Example: “wrongful dismissal” is replaced by “unlawful contractual termination.”

b) Hybrid Retrieval: Combines BM25 and dense semantic embeddings.

BM25 captures exact matches and dense retrieval captures chunks of conceptual similarity.

c) Reranking: First a bunch of documents are retrieved and then a cross-encoder reorders results using deep query-document interaction in a second pass before showing you the results.

d) Query Rewriting: The LLM reformulates ambiguous or non-technical queries into legally relevant language.

e) Graph RAG: Semantic and normative relationships are represented explicitly through graphs.

These technical solutions improve the situation but add layers of complexity; they require a lot of brute-force parameter tuning through trial and error.

And above all, they do not eliminate the underlying problem: the embedding systems many of these models work with were trained on general texts, not on specialized legal corpora (“corpi”?).

5. QUESTION Describe the mathematical background of attention dilution in the processing of judicial decisions and long briefs. Explain the operation of Softmax in the self-attention mechanism and why attention weights become increasingly uniformly distributed among tokens as sequence length increases.

Answer: The problem with very long documents: “He who grasps too much holds too little”

The last problem is mathematical but has very practical consequences.

I’ll spare you the formula part, but if you’re curious, look it up: it truly explains what the mythical Attention Is All You Need consists of.

AI models process documents by assigning “attention weights” to different parts of the text. The longer the document, the more those weights are spread across more fragments, and the less attention the model can dedicate to what is truly relevant.

In a two-hundred-page judgment, the ratio decidendi competes for attention with the factual background, the description of evidence, legal grounds rejected by the court, and routine procedural references.

The model “pays a little attention to everything” and may lose precisely what matters: the key statement hidden in the thirteenth legal ground, or a procedural exception mentioned in one line that explains much of the ruling.

The current trend is to abandon purely linear text processing in favor of structural representations of legal knowledge.

Ultimately, Attention Dilution is an inevitable mathematical consequence of probabilistic normalization in excessively long sequences.

As context grows, attention disperses and the model’s ability to focus on legally decisive information decreases.

This is another of the fundamental limitations of current LLMs: rigorous analysis of extensive legal documentation.

You are reading ZERO PARTY DATA. The newsletter about tech and legal affairs by Jorge García Herrero and Darío López Rincón.

In the free time that this newsletter leaves us, we solve complicated issues related to personal data protection regulations and artificial intelligence. If you have any of those, give us a hand. Or contact us by email at jgh(at)jorgegarciaherrero.com

🗞️News from the Data World 🌍

.- Jorge will be at the CPDP next week at Brussels, if you happen to attend, He will be more than happy to have a coffe with you.

.- $12.75 million fine for GM for selling location and driving data extracted from the full set of sensors in a modern connected car. Let’s not forget the EDPB guidelines on this topic from 2021.

It is true that the famous Californian CCPA has the striking point of prohibiting the sale or transfer of data to third parties. Exactly what they did here.

.- Canadian DPAs (they like to complicate the formula by all being competent in their region), are investigating the data processing OpenAI carried out to train GPT. We saw it via San Luis Montezuma, together with what appears to be a partial conclusions document.

📖 Hard data docs for coffeine lovers ☕️

.- AI Act regulation and medical devices: German roadmap. The Bundesnetzagentur, the Hessian Ministry of Digitalization, and the German Federal Data Protection Commissioner jointly publish a roadmap for AI in medical devices, resulting from a regulatory sandbox pilot project. Authors: Louisa Specht-Riemenschneider, Kristina Sinemus, Klaus Müller, Marius Khan.

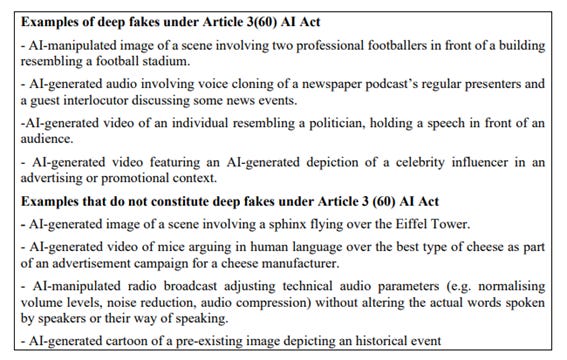

.- Haley Fine analyzes how existing privacy frameworks do not adequately cover AI’s ability to recreate a person’s voice and image with increasing realism, something that occurred after most current privacy laws were drafted. Deepfakes have caused what Anglo-Saxon law calls “likeness” (or “resemblance”): The deregulated “likeness” in creative industries: an urgent gap. In creative and media industries, this raises three questions with no clear answer: who can authorize the use of someone’s likeness, how that use should be compensated, and how synthetic outputs may be distributed. Traditional privacy principles —consent, purpose limitation, transparency— are relevant but insufficient.

.- The “judgment” economy: the humanities beat AI. Nils Gilman argues that AI will not destroy knowledge work but shift it toward what he calls the “judgment economy”: the least automatable capabilities —persuasion, negotiation, leadership under uncertainty, interdisciplinary reasoning, and strategic synthesis— will increase in value compared to the commodification of routine cognitive tasks. He ironically notes that liberal arts education, disparaged by figures like Peter Thiel, turns out to be exactly the training for the type of thinking LLMs do not replicate well.

💀Death by Meme🤣

🤖NoRobots.txt or The AI Stuff

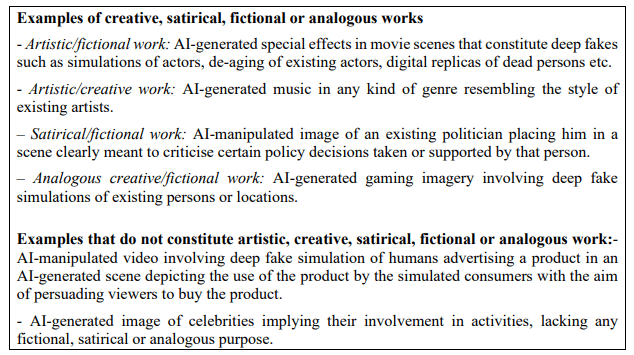

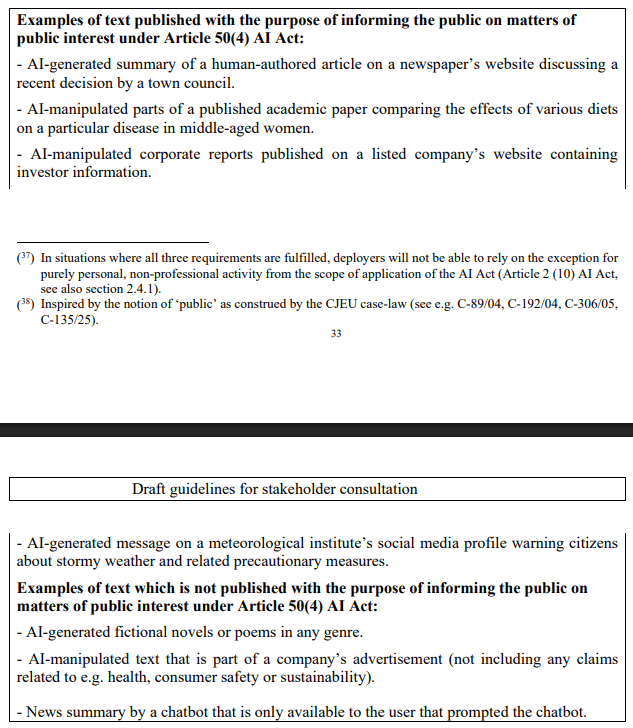

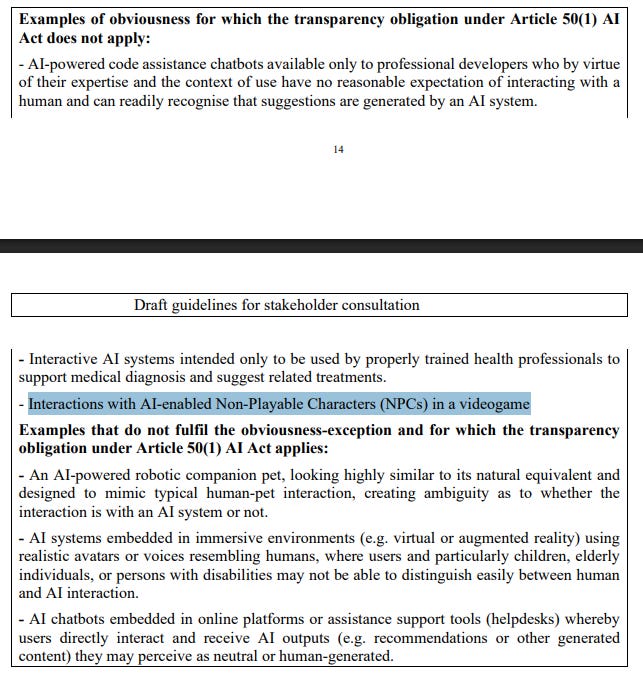

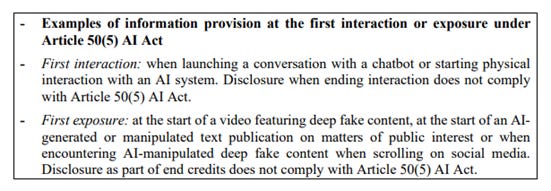

.- The document that matches the big topic opening this newsletter. The draft of the guidelines for compliance with information/transparency duties in AI-generated content. Labeling everywhere. And reminding about the adaptation deadline for enforceability, this very August 2026. And, as always, the most useful part being the tables of yes/no examples for each point.

Among others:

Labeling of deepfakes and nuances for artistic and satirical content.

Transparency in texts of public/general interest if they have been generated by AI. Unless there has genuinely been human editorial intervention and responsibility.

The obviousness test: It is not mandatory to inform users they are interacting with AI if it is obvious. They give NPCs in video games as an example, though they could never fool anyone with their nonexistent intelligence and great ability to get in the way (or get stuck in a wall or doorway).

Personal vs. public use: The classic deepfake of your brother-in-law for a silly greeting card that never ends up online is exempt due to personal/domestic use. On the other hand, labeling is required in the very Spanish case of political deepfakes used to criticize politicians on social media.

It also exempts standard text, audio, or similar editing functions that do not alter semantics, voices, or similar aspects. If in a podcast you had to declare content AI-generated because you used something of this sort to remove white noise or weird distortions, things would be getting out of hand. Same with a reviewed email.

Invisible and detectable watermarking and clarification of information.

Interaction with other regulations: transparency obligations apply without prejudice to data protection rules (GDPR), the Digital Services Act (DSA), and consumer protection. The usual reminder to never forget the GDPR.

.- What happens to the brain when it delegates to AI? The great Sergio San Juan reviews the available evidence on how the use of artificial intelligence tools transforms cognition, articulating three concepts: cognitive delegation (outsourcing mental load to tools), transactive memory (knowledge distributed among people or systems), and cognitive debt (accumulated loss of cognitive reserves due to abandoning mental effort). He examines the MIT study “Your Brain on ChatGPT” and points out its limitations: 54 participants, no peer review.

📃The paper of the week

.- As if we needed more parallels regarding bias risks, here comes another paper unlocking a new scare: whether the LLM manipulates you more or less depending on whether the company has sponsorship behind it: ADS IN AI CHATBOTS? AN ANALYSIS OF HOW LARGE LANGUAGE MODELS NAVIGATE CONFLICTS OF INTEREST. Study by researchers from Princeton and Washington Universities: Addison J. Wu, Ryan Liu, Shuyue Stella Li, Yulia Tsvetkov, and Thomas L. Griffiths.

Based on flight booking scenarios to examine advertising behavior/conflict-of-interest management in several models, as well as a hypothetical third-party case to see whether it could extrapolate beyond the main object. Among other conclusions, they determine that:

Almost all models recommend sponsored options above cheaper and non-sponsored ones. GPT seems more pro-company at 50%, while Gemini and Claude go the other way at between 28-37%.

The user’s economic level appears to be a key factor in recommending sponsored things or not. More for high-status users, less for those living paycheck to paycheck. The model doing some very interesting profiling for other areas.

Some dark patterns involving hiding whether something is sponsored, hiding prices to avoid comparisons, or persuasive language.

Some recommendations of things harmful to the user. For example, loans whose rates are not properly adjusted. Apparently the only one that resists screwing things up is Claude 4.5 Opus.

More dark patterns when trying to push extra services you don’t want. In case anyone missed the classic Ryanair move.

📎Useful tools

.- An excellent awareness tool: TAKEN. “Taken” shows you in real time the data your browser passively delivers the moment any page loads.

.- Voice-Pro: zero-shot voice cloning and multilingual dubbing. Open-source web application (Gradio + Python) developed by ABUS AI Korea integrating speech recognition (Whisper, WhisperX, Faster-Whisper), zero-shot voice cloning (F5-TTS, E2-TTS, CosyVoice), text-to-speech synthesis in more than 100 languages (Edge-TTS, kokoro), and vocal separation (Demucs), in addition to YouTube video downloading via yt-dlp. It allows multilingual dubbing while preserving the original voice. With 6,400 stars on GitHub. As of version 3.2 all code is open source and free. Requires Windows with NVIDIA GPU and CUDA 12.4.

🙄 Da-Tadum-bass

In someone at Palantir’s head this seemed like a good idea. Surely the same person who waved a sword around the office.

If you miss any doc, comment, or dumb thing that clearly should have been in this week’s Zero Party Data, write to us or leave a comment and we’ll consider it for the next one.