Generative AI: Transparency Obligations under the AI Act. Part Two: Image, Video and Audio (and Deepfakes)

Wax on! Wax off!

1 What and why

The AI Act regulates transparency as a fundamental pillar of technological governance, not only to prevent malicious deception of specific individuals or groups, but also to preserve the integrity of the global information ecosystem.

In other words, it seeks not only to protect individual rights, but also the functioning of the European political and social system.

Synthetic content is defined, in general terms, as any digital asset—whether text, image, audio, or video—generated or substantially manipulated by means of AI systems.

The transparency obligation is manifested through two operational dimensions: machine-readable marking and human-perceptible labeling.

· Marking refers to the integration of technical signals, such as cryptographically signed metadata or digital watermarks, that enable other systems to automatically identify the artificial origin of a file.

· Labeling is directed at the perception of the end user, using visual or auditory indicators that inform them of the AI nature of the content at the moment of exposure.

Article 50 of the EU AI Act imposes the transparency obligation for general synthetic content (not deepfakes) primarily on technology providers, who must ensure that their systems “mark” outputs natively.

If you are interested in the Article 50 transparency obligations, you should definitely check out the first part of this series.

I promise you that the rest of the text is written quite seriously, but at this point I cannot resist paying tribute to Karate Kid, with the secret hope that it may serve as a mnemonic rule to remember that providers are required to mark (wax on) and deployers, to label (wax also on).

You’re reading ZERO PARTY DATA. The newsletter on current affairs, technopolies, and law by Jorge García Herrero and Darío López Rincón.

In the spare moments this newsletter leaves us, we specialize in solving complicated stuff in personal data protection. If you have one of those, give us a little wave. Or contact us by email at jgh(at)jorgegarciaherrero.com


2 Article 50 of the AI Act

The European Union AI Act represents the first comprehensive attempt to codify these obligations at the international level.

The AI Act adopts a “risk-based” approach, classifying generative AI within the limited-risk category.

Accordingly, it imposes specific transparency requirements designed to foster trust and traceability.

2.1 Obligations for providers of generative AI systems

Article 50(2) provides that providers of AI systems—whether specialized or general-purpose (GPAI)—that generate synthetic audio, image, video (either deepfakes or not), or text content must ensure that outputs are marked in a machine-readable format.

This obligation is independent of the purpose of the generation or publication of the content: whether it is an artistic image generated for a blog or ambient audio for a video game, technical marking is mandatory.

The law requires these solutions to be “effective, interoperable, robust and reliable” to the extent permitted by the state of the art.

Accordingly, the provider must not only insert a mark but also make it resistant to attempts at removal or manipulation by malicious third parties.

In addition, outputs must be “detectable as artificially generated or manipulated”. In practice, this means providers must make free detection mechanisms available, such as APIs or public verification tools (“oracles”), so that deployers and other users can verify whether a specific file was generated by their AI.

2.2 Exemptions

Without prejudice to the foregoing, the European AI Act has envisaged scenarios in which strict transparency could be counterproductive or unnecessary.

· AI systems that function as “standard editing assistants.” This includes tools that do not substantially alter the input data or its semantics, such as color correction tools in photography, audio noise reduction systems, or video stabilization tools.

· AI systems used exclusively for scientific research and development purposes.

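· AI components distributed under free and open-source licences, but only within narrow limits: the general open-source exclusion in Article 2(12) expressly does not cover AI systems that fall under Article 50, and the lighter regime for open-source general-purpose models does not apply to models that present systemic risks.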

· The well-known household exemption: purely personal and non-professional use. An individual who generates an image for private use is not subject to the same labeling obligations as a company distributing commercial content.

3 The EU Code of Practice and the dates on which all this will apply

Since the text of the AI Act provides legal principles but not technical specifications, the European Commission has published a Code of Practice on Transparency.

In March 2026, its second version was published, and it is the version discussed here.

This Code of Practice seeks to bridge the gap between the legal obligation and technical reality by establishing what is considered the “state of the art” with regard to the marking and detection of synthetic content.

Is this Code mandatory? Nope

The Code is not mandatory in itself; it binds only the companies that sign up to it.

Subscription to this Code neither presumes nor certifies compliance with the AI Act (organizations that do not sign it may demonstrate compliance by other means).

But in practice, the idea is that the Code will become the compliance standard in inspections conducted by the competent authorities.

The third (and final) version of the Code is expected in June 2026, together with official Guidelines from the European Commission, but all of this will become known only a few weeks before Article 50 becomes applicable.

The problem is that, given the timetable, whether or not those documents arrive in time, it will be very difficult in practice to implement their new requirements in time for the applicable date: 2 August 2026.

Lastly, Article 50 will apply to AI systems placed on the market or put into service from 2 August 2026 onward. For systems already on the market before then, two transitional periods are under discussion: three or six months from that 2 August date (that is, until 2 November 2026 or 2 February 2027).

3.1 Multi-layered marking

The second draft of the Code acknowledges[1] (watch this carefully) that, under the current state of the art, no single method or tool can meet all the requirements laid down in the AI Act. For that reason, it proposes that providers should not limit themselves to a single marking technique, but should instead implement a multi-layered approach to make the system resilient.

This approach is divided into three fundamental levels:

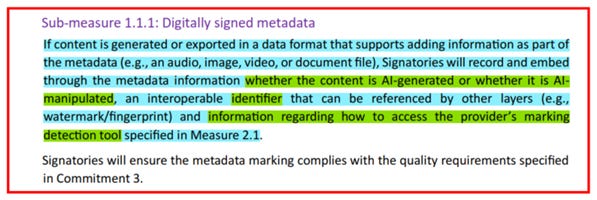

Metadata integration: This consists of inserting provenance information and digital signatures directly into the file header (such as XMP or IPTC tags). Although this is the easiest technique to implement, it is also the most vulnerable, as many image editors and social media platforms automatically strip metadata when processing files.
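To make the metadata layer (and its fragility) concrete, here is a minimal Python sketch using only the standard library. It writes a provenance note into a PNG `tEXt` chunk, reads it back, and then simulates a platform that re-encodes the upload keeping only the image data. Assumptions, loudly: the key `ai_provenance` and its value are hypothetical; real deployments would use standardized schemas such as XMP or IPTC fields, often inside a signed manifest, and nothing in this sketch is a digital signature.

```python
import struct
import zlib

PNG_SIGNATURE = b"\x89PNG\r\n\x1a\n"
# Critical chunks that a minimal re-encoder keeps; everything else is ancillary metadata.
CRITICAL = {b"IHDR", b"PLTE", b"IDAT", b"IEND"}


def make_chunk(chunk_type: bytes, data: bytes) -> bytes:
    """Serialize one PNG chunk: length, type, data, CRC-32 over type + data."""
    crc = zlib.crc32(chunk_type + data) & 0xFFFFFFFF
    return struct.pack(">I", len(data)) + chunk_type + data + struct.pack(">I", crc)


def iter_chunks(png: bytes):
    """Yield (type, data) pairs from a PNG byte string."""
    if not png.startswith(PNG_SIGNATURE):
        raise ValueError("not a PNG file")
    pos = len(PNG_SIGNATURE)
    while pos < len(png):
        (length,) = struct.unpack(">I", png[pos:pos + 4])
        chunk_type = png[pos + 4:pos + 8]
        yield chunk_type, png[pos + 8:pos + 8 + length]
        pos += 12 + length  # 4 bytes length + 4 bytes type + data + 4 bytes CRC


def add_provenance(png: bytes, key: str, value: str) -> bytes:
    """Insert a tEXt chunk (keyword, NUL byte, text) right after IHDR."""
    chunks = list(iter_chunks(png))
    if chunks[0][0] != b"IHDR":
        raise ValueError("IHDR must be the first chunk")
    text = make_chunk(b"tEXt", key.encode("latin-1") + b"\x00" + value.encode("latin-1"))
    rest = b"".join(make_chunk(t, d) for t, d in chunks[1:])
    return PNG_SIGNATURE + make_chunk(*chunks[0]) + text + rest


def read_provenance(png: bytes) -> dict:
    """Return all tEXt key/value pairs found in the file."""
    result = {}
    for chunk_type, data in iter_chunks(png):
        if chunk_type == b"tEXt":
            key, _, value = data.partition(b"\x00")
            result[key.decode("latin-1")] = value.decode("latin-1")
    return result


def reencode_like_a_platform(png: bytes) -> bytes:
    """Simulate an upload pipeline that silently drops every ancillary chunk."""
    kept = (make_chunk(t, d) for t, d in iter_chunks(png) if t in CRITICAL)
    return PNG_SIGNATURE + b"".join(kept)


def minimal_png() -> bytes:
    """A valid 1x1 8-bit grayscale PNG, used only for the demo."""
    ihdr = struct.pack(">IIBBBBB", 1, 1, 8, 0, 0, 0, 0)
    idat = zlib.compress(b"\x00\x80")  # filter byte 0, then one gray pixel
    return (PNG_SIGNATURE + make_chunk(b"IHDR", ihdr)
            + make_chunk(b"IDAT", idat) + make_chunk(b"IEND", b""))


if __name__ == "__main__":
    marked = add_provenance(minimal_png(), "ai_provenance", "generated-by=ExampleModel")
    print(read_provenance(marked))                            # {'ai_provenance': 'generated-by=ExampleModel'}
    print(read_provenance(reencode_like_a_platform(marked)))  # {}
```

The second print is the whole point: the pixels survive the round trip untouched, but the provenance note is gone.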

Imperceptible watermarks (watermarking): This technique involves embedding digital signals directly into the pixels of an image or into the frequencies of an audio file. These marks are invisible or inaudible to humans, but are designed to survive compression, cropping, or conversion into another format. The Code suggests that these marks be integrated both during model training and during the inference (generation) phase.
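A classic way to build an imperceptible watermark is additive spread spectrum: add a very faint, key-derived pseudo-random pattern to the signal and later detect it by correlation. The sketch below applies it to a list of audio-like samples. It illustrates the general technique only, not any provider's actual scheme or anything the Code prescribes; the key, the `strength` and the `threshold` values are illustrative assumptions.

```python
import random


def pn_sequence(key: int, length: int) -> list[float]:
    """Pseudo-random +/-1 carrier derived from a secret key (the provider's watermark key)."""
    rng = random.Random(key)
    return [rng.choice((-1.0, 1.0)) for _ in range(length)]


def embed_watermark(samples: list[float], key: int, strength: float = 0.05) -> list[float]:
    """Add a low-amplitude keyed carrier to every sample."""
    carrier = pn_sequence(key, len(samples))
    return [s + strength * c for s, c in zip(samples, carrier)]


def watermark_score(samples: list[float], key: int) -> float:
    """Mean correlation with the keyed carrier: close to `strength` if marked, close to 0 if not."""
    carrier = pn_sequence(key, len(samples))
    return sum(s * c for s, c in zip(samples, carrier)) / len(samples)


def is_watermarked(samples: list[float], key: int, threshold: float = 0.025) -> bool:
    """Decide by comparing the correlation score with a fixed threshold."""
    return watermark_score(samples, key) > threshold


if __name__ == "__main__":
    rng = random.Random(0)
    host = [rng.gauss(0.0, 0.3) for _ in range(20_000)]  # stand-in for unmarked audio samples
    marked = embed_watermark(host, key=1234)
    # Simulated lossy processing: added noise, then rounding to two decimals.
    degraded = [round(s + rng.gauss(0.0, 0.1), 2) for s in marked]
    for label, samples, key in [("unmarked", host, 1234),
                                ("degraded", degraded, 1234),
                                ("wrong key", degraded, 9999)]:
        print(label, is_watermarked(samples, key))
```

Detection survives the added noise and rounding because the correlation averages over thousands of samples. This naive version would, however, lose synchronization if the audio were cropped or shifted in time, which is exactly the kind of weakness that motivates more elaborate production schemes and a multi-layered approach.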

Fingerprinting and output logging: As a final layer of security, providers are expected to maintain a database or log of the hashes of generated content. This makes it possible, if content loses its metadata and watermark, for a detection system to identify it by comparing its hash or unique “fingerprint” against the provider’s records.
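The fingerprinting layer can be sketched in the same spirit. A cryptographic hash such as SHA-256 only matches byte-identical files, so a log meant to recognize edited copies also needs a perceptual fingerprint. The sketch below stores both and uses a simple average hash (aHash) as the perceptual one. `OutputLog`, the toy image and the `max_distance` tolerance are hypothetical; real systems use far more robust fingerprints and indexed databases rather than a linear scan.

```python
import hashlib


def exact_hash(pixels: list[list[int]]) -> str:
    """SHA-256 over raw 8-bit pixel values: changes if any single pixel changes."""
    return hashlib.sha256(bytes(v for row in pixels for v in row)).hexdigest()


def average_hash(pixels: list[list[int]], size: int = 8) -> int:
    """64-bit aHash: average into size x size blocks, one bit per block above the mean.

    Assumes a grayscale image whose sides are multiples of `size`.
    """
    bh, bw = len(pixels) // size, len(pixels[0]) // size
    blocks = []
    for by in range(size):
        for bx in range(size):
            total = sum(pixels[y][x]
                        for y in range(by * bh, (by + 1) * bh)
                        for x in range(bx * bw, (bx + 1) * bw))
            blocks.append(total / (bh * bw))
    mean = sum(blocks) / len(blocks)
    bits = 0
    for b in blocks:
        bits = (bits << 1) | (1 if b > mean else 0)
    return bits


class OutputLog:
    """Hypothetical provider-side log of generated outputs."""

    def __init__(self, max_distance: int = 5):
        self.max_distance = max_distance  # Hamming-distance tolerance (illustrative value)
        self.records: list[tuple[str, int, str]] = []

    def register(self, output_id: str, pixels: list[list[int]]) -> None:
        self.records.append((exact_hash(pixels), average_hash(pixels), output_id))

    def lookup(self, pixels: list[list[int]]):
        """Return (output_id, match_type) or None if nothing in the log matches."""
        digest, fingerprint = exact_hash(pixels), average_hash(pixels)
        for stored_digest, _, output_id in self.records:
            if stored_digest == digest:
                return output_id, "exact"
        for _, stored_fp, output_id in self.records:
            if bin(stored_fp ^ fingerprint).count("1") <= self.max_distance:
                return output_id, "perceptual"
        return None


if __name__ == "__main__":
    log = OutputLog()
    image = [[x * 8 + y * 4 for x in range(16)] for y in range(16)]  # toy 16x16 grayscale output
    log.register("output-0001", image)
    brighter = [[v + 10 for v in row] for row in image]  # simulated edit after distribution
    print(log.lookup(image))     # ('output-0001', 'exact')
    print(log.lookup(brighter))  # ('output-0001', 'perceptual')
```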

The Code requires signatories (providers, deployers) to

(i) refrain from removing or altering this marking; and

(ii) impose, through acceptable use policies (or information, in the case of open-source models), the same obligation on downstream third parties.

3.2 Quality requirements and technical performance

Compliance is not based only on “doing it”, but on doing it effectively.

The Code provides that detection solutions must demonstrate low levels of false positives and false negatives.

Providers must subject their tools to independent evaluations and verified benchmarks in order to demonstrate that their solutions meet the performance standard required by the AI Office.
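The two error rates are easy to pin down. A false positive is a human-made file flagged as AI-generated; a false negative is an AI-generated file the detector misses. Here is a sketch of how they could be computed from a labelled benchmark. The benchmark format and the example counts are assumptions for illustration, and no pass/fail threshold is given in the text above.

```python
from dataclasses import dataclass


@dataclass
class DetectorMetrics:
    false_positive_rate: float  # share of human-made files wrongly flagged as AI-generated
    false_negative_rate: float  # share of AI-generated files the detector missed


def evaluate_detector(results: list[tuple[bool, bool]]) -> DetectorMetrics:
    """`results` holds (is_ai_generated, detector_flagged) pairs from a labelled benchmark."""
    fp = sum(1 for truth, flagged in results if not truth and flagged)
    tn = sum(1 for truth, flagged in results if not truth and not flagged)
    fn = sum(1 for truth, flagged in results if truth and not flagged)
    tp = sum(1 for truth, flagged in results if truth and flagged)
    if fp + tn == 0 or fn + tp == 0:
        raise ValueError("benchmark needs both AI-generated and human-made samples")
    return DetectorMetrics(fp / (fp + tn), fn / (fn + tp))


if __name__ == "__main__":
    # Illustrative benchmark: 100 human-made files (2 wrongly flagged), 100 AI files (5 missed).
    results = ([(False, True)] * 2 + [(False, False)] * 98
               + [(True, False)] * 5 + [(True, True)] * 95)
    print(evaluate_detector(results))
    # DetectorMetrics(false_positive_rate=0.02, false_negative_rate=0.05)
```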

4 Deepfakes: Mandatory labeling

A deepfake, within the meaning of the AI Act,[2] is image, audio, or video content generated or manipulated by AI that appreciably resembles existing persons, objects, places, entities, or events, and that a reasonable person could falsely perceive as authentic or truthful.

All deepfakes (either image, video, or audio) must be labelled in a clear, distinguishable, and accessible way no later than the moment of the user’s first exposure to the content.

The Code of Practice sets out some requirements common to all labels, which are then complemented by specific rules for each modality (see section 5):

• Main visual element: the acronym “AI” in uppercase, optionally accompanied by text such as “Generated with AI,” “Made by AI,” or “Manipulated with AI.”

• Minimum contrast ratio: 4.5:1 between the label and the background.

• Portability: to the extent technically feasible, the label must “travel” with the content when it is redistributed.

• Accessibility: alternative text for screen readers, tactile signals for audio-only content (where the device permits), compatibility with assistive technologies.
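The 4.5:1 figure matches the WCAG 2.x minimum contrast for normal text, so a reasonable assumption is that the ratio is computed the WCAG way: relative luminance of each colour, then (lighter + 0.05) / (darker + 0.05). The sketch below does exactly that for an sRGB label colour and background; whether the Code prescribes this exact formula is our assumption, and the function names are hypothetical.

```python
def _linear(channel: int) -> float:
    """sRGB channel (0-255) to linear light, per the WCAG 2.x relative-luminance definition."""
    c = channel / 255
    return c / 12.92 if c <= 0.03928 else ((c + 0.055) / 1.055) ** 2.4


def relative_luminance(rgb: tuple[int, int, int]) -> float:
    r, g, b = (_linear(v) for v in rgb)
    return 0.2126 * r + 0.7152 * g + 0.0722 * b


def contrast_ratio(fg: tuple[int, int, int], bg: tuple[int, int, int]) -> float:
    """Ratio between 1:1 (identical colours) and 21:1 (black on white); order does not matter."""
    lighter, darker = sorted((relative_luminance(fg), relative_luminance(bg)), reverse=True)
    return (lighter + 0.05) / (darker + 0.05)


def label_contrast_ok(label_rgb, background_rgb, minimum: float = 4.5) -> bool:
    """Check the label against the 4.5:1 minimum (assumed to be computed as in WCAG)."""
    return contrast_ratio(label_rgb, background_rgb) >= minimum


if __name__ == "__main__":
    white = (255, 255, 255)
    print(label_contrast_ok((0x76, 0x76, 0x76), white))  # True
    print(label_contrast_ok((0x77, 0x77, 0x77), white))  # False
```

For example, mid-grey #767676 on a white background gives roughly 4.54:1 and passes, while the barely lighter #777777 gives roughly 4.48:1 and fails: a label can look perfectly readable to its designer and still miss the threshold.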

5 Specific rules

5.1 Image

For images, the icon or label must be displayed consistently from the first exposure and at each subsequent exposure. The label must be clearly distinguishable from the image itself: prominent, not hidden within layers of the image, not too close to other icons or text, and not displayed against backgrounds that reduce its visibility.

5.2 Live video (live broadcasting, livestreaming)

For live video, the icon must be displayed consistently throughout the entire exposure, where feasible. If the disclosure is also made by audio, it must be presented simultaneously with the visual icon. Alternatively, a visual or auditory notice may be used at the beginning and at regular intervals during the broadcast.

5.3 Pre-recorded video

• Long videos: icon or label at least at the beginning, repeated at regular intervals (for example, each time the deepfake content appears or after advertising breaks).

• Short videos: icon or label visible consistently throughout the duration. If the video is entirely generated or manipulated by AI, this must be indicated throughout playback.

• Credits: a notice may be included in the end credits, but this must always be accompanied by one of the preceding options. Credits alone are not sufficient.
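For long pre-recorded videos, the rule boils down to a small scheduling problem: show the label at the start, again whenever deepfake content appears, and after each advertising break, and never rely on credits alone. A sketch, with hypothetical function names and plan labels, and with times in seconds:

```python
def long_video_label_times(deepfake_segments: list[tuple[float, float]],
                           ad_break_ends: list[float]) -> list[float]:
    """Start times (seconds) at which to display the AI label in a long pre-recorded video.

    deepfake_segments: (start, end) pairs where deepfake content appears.
    ad_break_ends: moments at which the programme resumes after an advertising break.
    """
    times = {0.0}  # always at the beginning of the video
    times.update(float(start) for start, _end in deepfake_segments)
    times.update(float(t) for t in ad_break_ends)
    return sorted(times)


# Hypothetical names for disclosure options; "credits" never counts on its own.
SUFFICIENT_OPTIONS = {"opening_and_repeats", "throughout"}


def plan_is_sufficient(plan: set[str]) -> bool:
    """True if the plan includes at least one option other than end credits."""
    return bool(plan & SUFFICIENT_OPTIONS)


if __name__ == "__main__":
    print(long_video_label_times([(120, 180), (600, 660)], ad_break_ends=[900]))
    # [0.0, 120.0, 600.0, 900.0]
    print(plan_is_sufficient({"credits"}), plan_is_sufficient({"credits", "throughout"}))
    # False True
```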

5.4 Audio

• Audio under 30 seconds (advertisements, commercial spots): at a minimum, a brief audio notice at the beginning of the content.

• Longer audio content (podcasts, AI-generated calls, radio broadcasts): repeated audio notices at the beginning, at appropriate intermediate stages, and at the end of the content.

• Language: the notice must be given in clear and simple natural language, in the same language as the content.

• Audio with a visual interface (playback on a mobile phone screen, in a car, etc.): in addition to the audio notice, an icon or visual label must be displayed on the interface elements under the deployer’s control.
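The audio rules can be sketched the same way. The text does not define what “appropriate intermediate stages” means, so the 10-minute spacing and the 5-second notice length below are purely illustrative assumptions; only the under-30-seconds branch follows the rule directly.

```python
def audio_notice_times(duration_s: float, interval_s: float = 600.0,
                       notice_s: float = 5.0) -> list[float]:
    """Start times (seconds) for spoken AI notices in an audio deepfake.

    Under 30 s: a single notice at the beginning.
    Otherwise: beginning, every `interval_s` seconds (assumed spacing), and a final
    notice starting `notice_s` seconds before the end (assumed notice length).
    """
    if duration_s <= 0 or interval_s <= 0:
        raise ValueError("duration and interval must be positive")
    if duration_s < 30:
        return [0.0]
    end_notice = duration_s - notice_s
    times = [0.0]
    t = interval_s
    while t < end_notice:
        times.append(t)
        t += interval_s
    times.append(end_notice)
    return times


if __name__ == "__main__":
    print(audio_notice_times(20))    # [0.0]
    print(audio_notice_times(3600))  # [0.0, 600.0, 1200.0, 1800.0, 2400.0, 3000.0, 3595.0]
```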

5.5 Multimodal content

For content combining several modalities (image-text-audio, image-audio, text-audio, image-text), disclosure must be made by means of an icon or label that is clearly perceptible without any additional interaction on the part of the user.

6 Exceptions

6.1 “Non-exceptions”

· The “editorial exemption” does not apply to deepfakes.

· Labelling is mandatory even where the “cloned” person has given explicit consent.

6.2 Exceptions

Use authorized by law for the detection, prevention, investigation, or prosecution of criminal offences. This exception has very short legs in the private sector.

Artistic and creative works

For deepfake content forming part of an evidently artistic, creative, satirical, fictional, or analogous work, the transparency obligation (labelling) is mitigated, but it does not disappear.

Disclosure must be made in a way that does not impair the exhibition or enjoyment of the work. The options include:

• Live or near-live video: icon in the upper or lower corners for at least five seconds at the beginning (for example, during the opening credits), without the need to repeat it during the remainder of the exposure.

• Pre-recorded video: disclosure for a sufficient duration at the beginning, without interfering with the experience.

• Image: label at the beginning of the first exposure, which may be integrated into the image or its background provided that the user can distinguish the labeling.

• Audio: audio notice at the beginning of the first exposure.

• Non-digital contexts (exhibitions, galleries, festivals): disclosure may be made at the point of entry or upon ticket sale.

Jorge García Herrero

Lawyer and Data Protection Officer

[1] Strong words in the Code: “So long as no single marking approach is sufficient, under the state of the art, to comply with the four requirements in Article 50(2) AI Act of effectiveness, interoperability, robustness and reliability, Signatories will implement a multi-layered marking approach to ensure that the outputs of their generative AI systems are marked with at least two layers of machine-readable active marking, as specified in the sub-measures below” (p. 10).

[2] Article 3(60) of the AI Act