Generative AI: Transparency obligations. Part one: text.

You may be interested in reading this one

As of August 2026, the AI Act requires marking and labeling (they are two different things) content generated with AI.

Today we are not going to get into the changes to the entry-into-force date for new systems and systems already in use that may result from the various omnibus packages on the table (omnibus is already a plural in Latin).

At the beginning of March, the European Commission published the second version of its Code of Practice on Marking and Labeling AI-Generated Content, which is the version this post relies on.

This code also refers to image, video, audio and deepfakes, but today we are starting by talking about text: a topic that barely matters given that almost nobody uses AI today to help them write.

You’re reading ZERO PARTY DATA. The newsletter on current affairs, technopolies, and law by Jorge García Herrero and Darío López Rincón.

In the spare moments this newsletter leaves us, we specialize in solving complicated stuff in personal data protection. If you have one of those, give us a little wave. Or contact us by email at jgh(at)jorgegarciaherrero.com

1. But I am not OpenAI or one of the magnificent seven... Does this apply to me?

In that case this post will be useful, not for you (since you are not caught by it), but for anyone who, in the course of their work, uses generative AI tools to produce, rewrite or edit texts intended:

(i) To be published in the name of their organization.

(ii) On matters of “public interest”.

This includes, among other examples, the drafting of press releases, market reports, regulatory analyses, content for the corporate blog, social media posts for informational purposes, and any other text intended to inform the public about matters of public interest.

The concept of “public interest” is not defined in the AI Act (the EU AI Regulation).

The European Commission's interpretative guidelines are expected in the second quarter of 2026 and may not arrive until just a few weeks before the deadline.

A prudent criterion would be: if the text aims to inform and is directed at the general public or a relevant segment of it, it is presumed to fall within the scope of application.

If generative AI is used to produce text that is going to be published externally for informational purposes, that text must be labeled as AI-generated, unless the conditions of the editorial exception are met.

2. What needs to be done? Label the text

When publishing text generated or manipulated with AI on matters of “public interest”, its synthetic origin must be disclosed clearly and distinctly, so that the reader recognizes it as such when accessing the content for the first time.

The Code of Practice establishes specific requirements for this disclosure:

2.1 Labeling format

• Icon or visual label: the main element will be the acronym “AI” in uppercase, optionally accompanied by a short text such as “Generated with AI”, “Made by AI” or “Manipulated with AI”.

• Contrast ratio: minimum 4.5:1 between the label and the background on which it is displayed.

• Location: in a fixed position, for example above or at the beginning of the text, next to the headline, or in the opening colophon. The key point is that it is perceptible before the reader begins reading.

• Partially generated texts: if only a part of the text has been generated or manipulated by AI, it is enough to label that specific part.

• Very short texts: for short texts where a label would degrade readability, a contextual notice in the interface may be used instead (for example, an indicator next to the output or a note at page or session level).
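
As a rough illustration of the location and partial-labeling rules above, here is a minimal Python sketch. Everything in it is an assumption made for the example: the `Paragraph` type, the `render_with_labels` function and the plain-text `[AI]` marker are hypothetical, and the Code itself talks about an icon or visual label, not a bracketed string.

```python
from dataclasses import dataclass

LABEL = "AI"                      # main element: the acronym in uppercase
SHORT_TEXT = "Generated with AI"  # optional companion text quoted in the post


@dataclass
class Paragraph:
    text: str
    ai_generated: bool  # hypothetical flag set by whoever drafted the paragraph


def render_with_labels(paragraphs: list[Paragraph]) -> str:
    """Return the text with a label placed before the AI-generated content."""
    tag = f"[{LABEL}] {SHORT_TEXT}"
    if not any(p.ai_generated for p in paragraphs):
        # Nothing synthetic: no label at all.
        return "\n\n".join(p.text for p in paragraphs)
    if all(p.ai_generated for p in paragraphs):
        # Whole text is synthetic: one label at the top, before reading starts.
        return "\n\n".join([tag] + [p.text for p in paragraphs])
    # Partially generated: label only the AI paragraphs.
    return "\n\n".join(
        f"{tag}\n{p.text}" if p.ai_generated else p.text for p in paragraphs
    )


if __name__ == "__main__":
    draft = [Paragraph("Intro written by a human.", False),
             Paragraph("Summary drafted with an AI tool.", True)]
    print(render_with_labels(draft))
```

The sketch does not cover the "very short texts" case, where the notice lives in the interface rather than in the text.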

2.2 Accessibility

The label must be accessible for people with disabilities: compatible with screen readers, with sufficient contrast for people with color vision deficiencies, and detectable by assistive technologies.

At minimum, the criteria of the WCAG 2.1 level AA standard must be applied.
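
The 4.5:1 figure is the WCAG AA contrast threshold for normal text, so it can be checked mechanically. A minimal Python sketch of the WCAG 2.1 contrast-ratio formula follows; the function names are ours, and the colors in the usage example are arbitrary assumptions, not values taken from the Code.

```python
def _linearize(channel: int) -> float:
    """Convert one 8-bit sRGB channel to linear light, per the WCAG 2.1 definition."""
    c = channel / 255
    return c / 12.92 if c <= 0.03928 else ((c + 0.055) / 1.055) ** 2.4


def relative_luminance(rgb: tuple[int, int, int]) -> float:
    """Relative luminance of an sRGB color, from 0.0 (black) to 1.0 (white)."""
    r, g, b = (_linearize(v) for v in rgb)
    return 0.2126 * r + 0.7152 * g + 0.0722 * b


def contrast_ratio(fg: tuple[int, int, int], bg: tuple[int, int, int]) -> float:
    """WCAG contrast ratio: (lighter + 0.05) / (darker + 0.05)."""
    lighter, darker = sorted(
        (relative_luminance(fg), relative_luminance(bg)), reverse=True
    )
    return (lighter + 0.05) / (darker + 0.05)


def meets_label_contrast(fg, bg, minimum: float = 4.5) -> bool:
    """True if the label color reaches the minimum ratio against its background."""
    return contrast_ratio(fg, bg) >= minimum


if __name__ == "__main__":
    white = (255, 255, 255)
    dark_gray = (0x33, 0x33, 0x33)  # arbitrary example color
    print(round(contrast_ratio(dark_gray, white), 2),
          meets_label_contrast(dark_gray, white))
```

A check like this only covers the contrast criterion; screen-reader compatibility still has to be verified separately.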

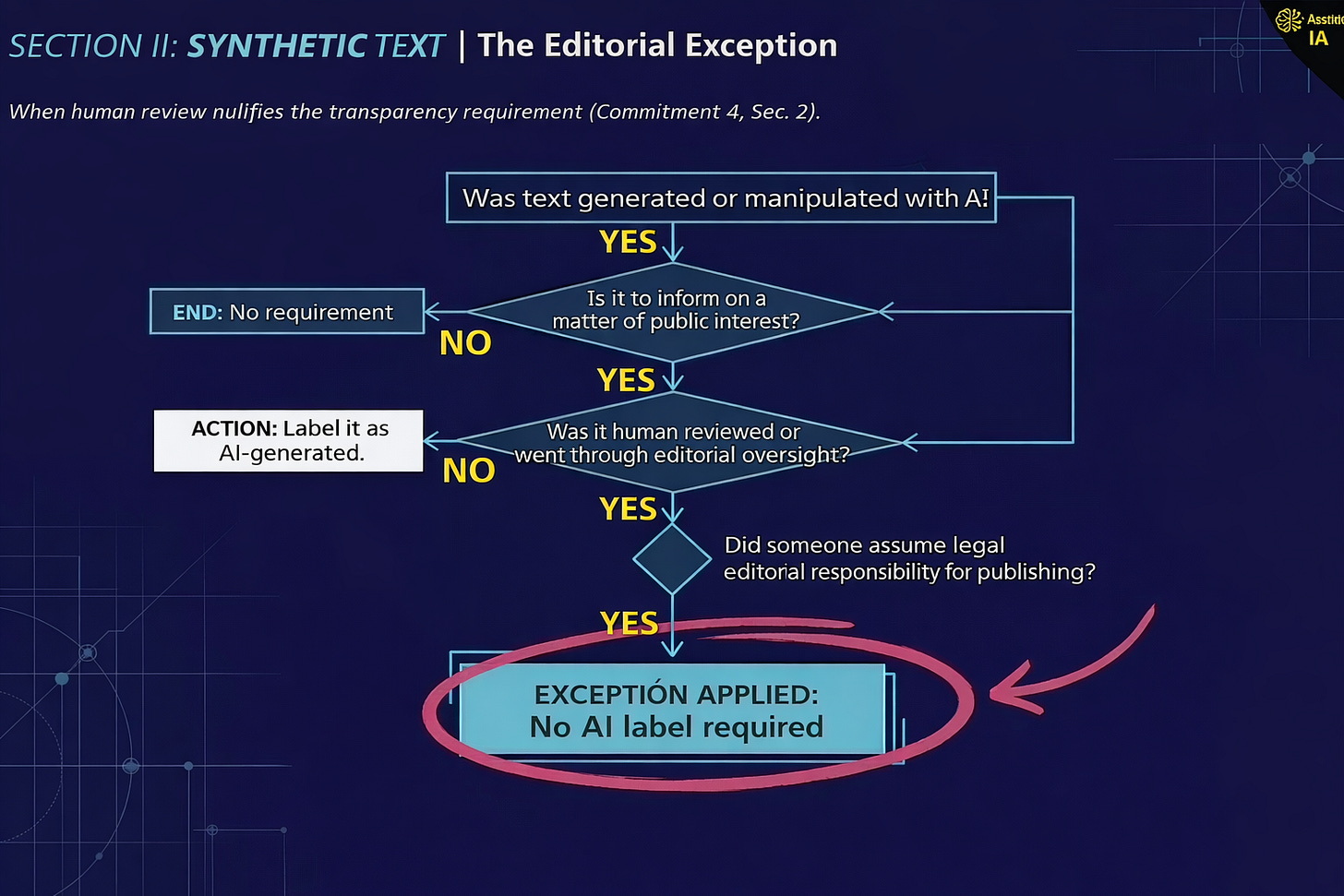

3. Can this labeling be avoided? The “editorial exception”

Article 50(4) of the AI Act establishes that the labeling obligation does not apply when:

i. The AI-generated content has been subjected to a human review process or editorial control, and

ii. A natural or legal person assumes editorial responsibility for the publication.

Consequently, relying on this exception requires meeting specific and documented requirements:

• Identified editorial responsible party: The name, position and contact details of the person (natural or legal) who assumes editorial responsibility must be published. These details must be publicly accessible.

• Documented review process: the organization must document the organizational measures and human resources assigned to ensure that effective human review is carried out before publication.

• Formalized workflow: it is not enough to “document” that someone “must read the text and take responsibility for it before publishing”.

A documented procedure is needed, with identified responsible parties and traceability of the process. If this process is not followed, the text must be labeled.
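
Putting the scope test and the editorial exception together, the decision can be sketched as a small Python function. This is our reading of the post reduced to booleans, not an official test: the `Publication` fields and the `must_label` name are hypothetical, and real cases will need legal judgment on each flag.

```python
from dataclasses import dataclass


@dataclass
class Publication:
    # All field names are hypothetical; each one summarizes a condition from the post.
    ai_generated_or_manipulated: bool
    informs_public_on_public_interest: bool
    responsible_party_published: bool   # name, position and contact details are public
    review_process_documented: bool     # measures and staff for human review are documented
    workflow_followed_and_traceable: bool  # the documented procedure was actually followed


def editorial_exception_applies(pub: Publication) -> bool:
    """All three requirements must hold; missing any one defeats the exception."""
    return (pub.responsible_party_published
            and pub.review_process_documented
            and pub.workflow_followed_and_traceable)


def must_label(pub: Publication) -> bool:
    """True if the text has to carry an AI label under the rule discussed here."""
    in_scope = (pub.ai_generated_or_manipulated
                and pub.informs_public_on_public_interest)
    if not in_scope:
        # Outside the text-labeling rule covered in this post (other duties may apply).
        return False
    return not editorial_exception_applies(pub)


if __name__ == "__main__":
    press_release = Publication(True, True, True, True, False)
    print(must_label(press_release))  # review documented, but workflow not followed
```

The point of separating `editorial_exception_applies` is that the exception is something you have to be able to prove, flag by flag, rather than something you assume.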

4. Consequences of non-compliance

The AI Act provides for significant penalties for non-compliance with transparency obligations.

Art. 99 AI Act: up to €15 million or 3% of the total worldwide annual turnover of the financial year preceding the infringement, whichever amount is higher.
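
To make the "whichever is higher" rule concrete, here is a small worked example in Python. It only encodes the ceiling as stated above; the function name and the turnover figures are made up for illustration, and the actual fine in any given case is set by the authority below that ceiling.

```python
FIXED_CAP_EUR = 15_000_000  # fixed amount stated above
TURNOVER_PERCENT = 3        # percentage of worldwide annual turnover stated above


def max_fine_eur(worldwide_turnover_eur: float) -> float:
    """Upper limit of the fine: the higher of the fixed cap and 3% of turnover."""
    return max(FIXED_CAP_EUR, worldwide_turnover_eur * TURNOVER_PERCENT / 100)


if __name__ == "__main__":
    # Hypothetical turnovers: 100 million, 500 million and 1 billion euros.
    for turnover in (100_000_000, 500_000_000, 1_000_000_000):
        print(f"{turnover:>13,} -> {max_fine_eur(turnover):>12,.0f}")
```

On these assumptions the fixed amount is the binding limit up to €500 million of turnover, and the percentage takes over above that.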

Although the Code of Practice is not binding on the authorities (adhering to it does not create a presumption of compliance with the rule), those who do not subscribe to it must prove by other means that they comply with the same obligations under Article 50 of the AI Act.

In practice, the Code will function as the reference standard for inspections by the competent authorities.

Jorge García Herrero

Lawyer and Data Protection Officer.